Overview of Experiments

We conducted two assumption tests to evaluate key hypotheses about our app.

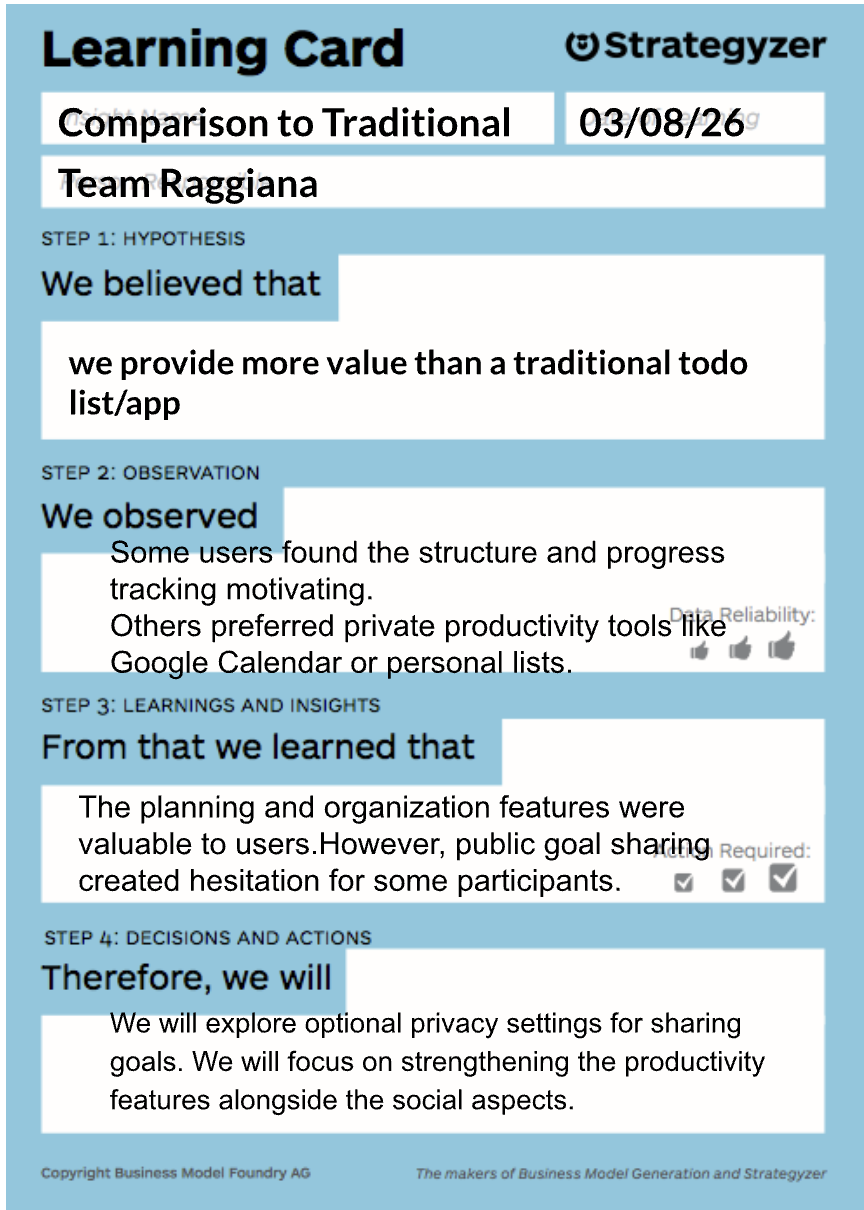

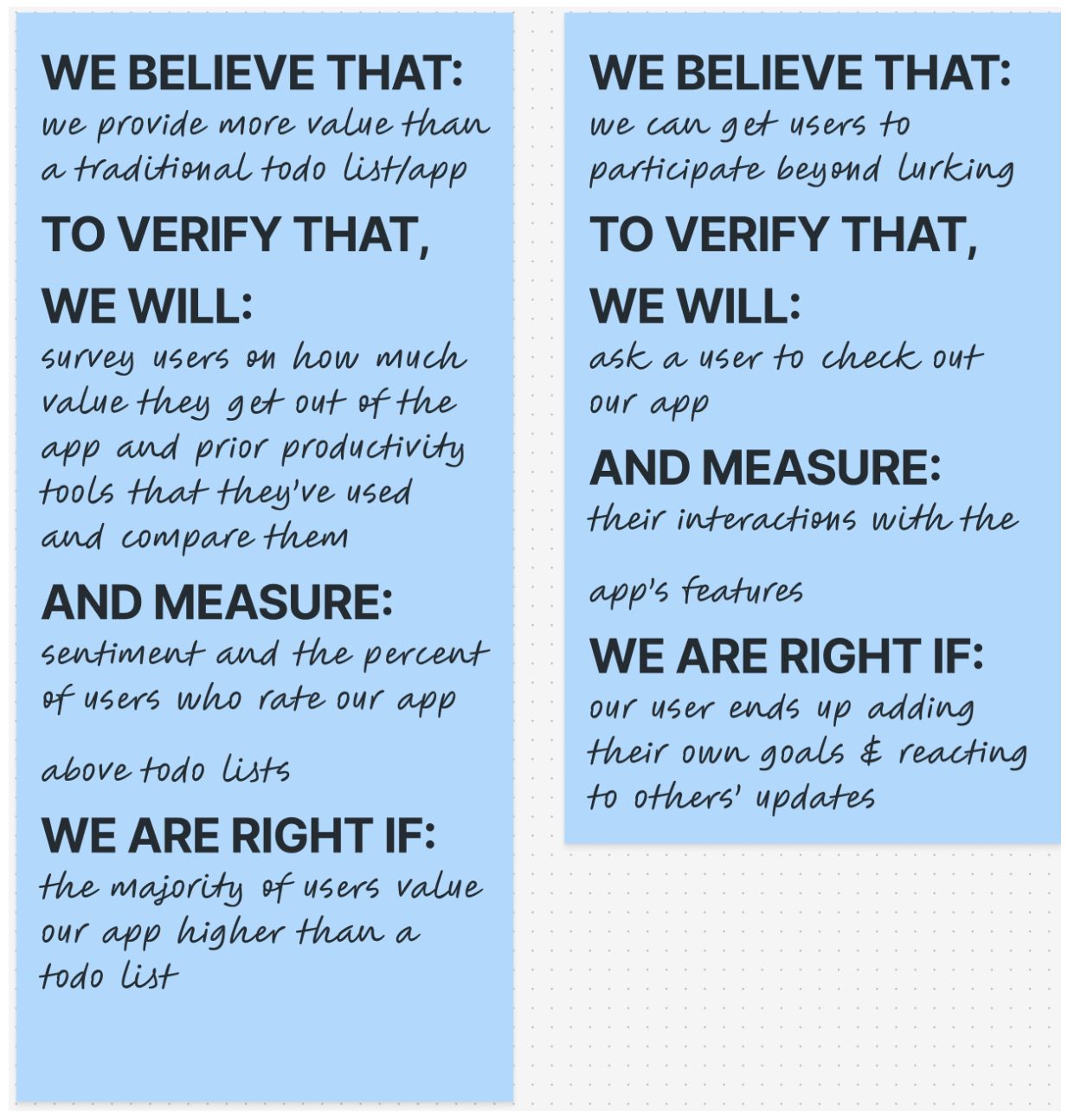

Experiment 1: Value Compared to Traditional To-Do Lists

We believed that our app provides more value than a traditional to-do list because it adds social accountability and shared progress tracking. To test this, we asked participants about their current productivity tools and compared their experiences using our app versus their usual task management methods. We measured perceived usefulness and user sentiment.

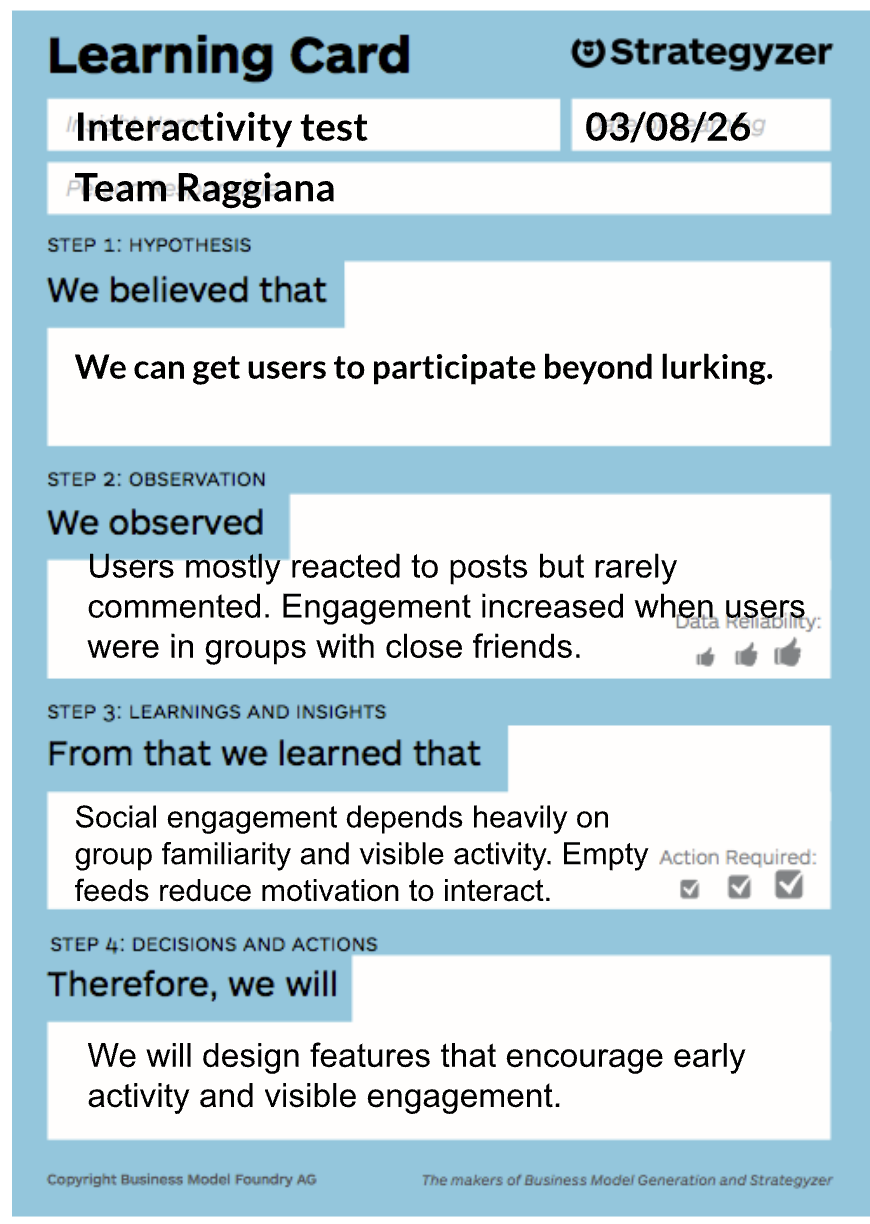

Experiment 2: Participation Beyond Lurking

We believed that users would actively interact with others in the app, rather than simply observing. To test this, participants explored the app, created goals, and interacted with posts in a group. We observed their interactions with features such as posting goals, reacting to others’ updates, and commenting.

Detailed Experiment Design

Detailed experiment design and methodology can be found here:

https://docs.google.com/document/d/1XkDWblg7x19bZjpfLg0aRNcJHPQMwBDxMwO-KsbfAdE/edit?usp=sharing

Participants and Recruitment

We recruited a mix of returning participants and new participants to capture both experienced and first-time perspectives. Returning participants had previously taken part in our intervention study and were already familiar with the accountability concept behind our app. This allowed them to provide more grounded feedback about how Raggiana fits into their productivity workflow.

New participants had no prior exposure to the project, which helped us evaluate first impressions, usability clarity, and whether the value proposition was immediately understandable. By combining these perspectives, we were able to balance deeper contextual feedback with unbiased reactions to the interface and experience.

Participants

Malaya

Malaya was a returning participant from our earlier intervention study. Because she was already familiar with the idea of accountability-based productivity tools, she was able to focus on how Raggiana compared to the tools she currently uses, such as handwritten to-do lists and Canvas timelines. Her feedback helped us understand how the app could integrate with existing student productivity habits.

Naomi

Naomi was a new participant who had not interacted with the project before. Her testing session provided valuable insight into first-time navigation, onboarding clarity, and interface expectations. Because she approached the app with no prior context, she helped reveal usability friction points and feature confusion that returning users might overlook.

John

John was also a new participant who approached the app without prior knowledge of the concept. His testing highlighted differences in user expectations around goal sharing and social productivity features. He also surfaced several interaction ideas and edge cases that helped us think more critically about how users might interpret the app’s purpose.

Ashley Lopez

Ashley was recruited as a new participant who regularly manages tasks using tools like Google Calendar. Her feedback helped us understand how the social accountability aspect of the app might influence motivation when working alongside peers. She also provided perspective on how competitive or collaborative dynamics could encourage task completion.

Gulperi

Gulperi was a new participant who approached the app with a critical perspective on social goal sharing. Her feedback helped us surface deeper concerns about privacy, trust, and the social dynamics of sharing goals publicly. This perspective was particularly valuable because it challenged one of our core assumptions about the appeal of accountability-based productivity tools.

Artifacts and Experiment Report

Photos: https://drive.google.com/drive/folders/1S2INq_1MXmSwYH9WVU-ULw6l_NBLSR1o?usp=sharing

Detailed assumption test report:

https://docs.google.com/document/d/1tTcEB_S-sezeBNp6hZJSAMtHatMd8E4Z8Df6y5ojBz0/edit?usp=drive_link