Introduction

Project Summary

Social plans fall apart constantly, not because people don’t care, but because the gap between intending to show up and actually following through is wider than most people realize. Stanford students routinely make plans with genuine enthusiasm, only to cancel at the last minute, citing academic pressure, other commitments, or simply being “busy.” Our research revealed that flaking is not a character defect; it is a systemic problem driven by plan vagueness, poor commitment mechanics, and a lack of early accountability.

Sticki is an iMessage extension designed to help people make their plans actually happen. Targeting socially active Stanford students who want to be more reliable but struggle to follow through, our intervention combines three behavior change mechanisms: a scheduling assistant that detects vague plans in text threads and prompts users to lock in specifics; pre- and post-event check-ins that build mindfulness and meta-awareness around social commitments; and a dynamic FlakeScore that gives users a private, data-driven view of their own reliability over time. The core design decisions were to work within the messaging context users already inhabit (reducing context switching), to focus on plan specificity as a driver of outcomes, and to keep scores private to avoid shaming. Taken together, Sticki’s multi-pronged approach works with the user to turn a hesitant “maybe” into a meaningful outcome.

Secondary Research

Literature Review

Our literature review was driven by two core questions: what factors drive people to commit to social plans, and what happens when those plans fall apart?

On the commitment side, we drew on Kapetaniou et al.’s game theory research, which found that people use costly signaling most when they have low confidence in the other person’s behavior (2023). Applied to social planning, this suggested that communication is a key part of resolving uncertainty around commitments, and that designing for clearer, earlier signals of intent could meaningfully reduce ambiguity between plan-makers.

On the cancellation side, two papers shaped our thinking. Majumder and Martuza found that people consistently overestimate the negative social impact of canceling plans, which suggests that some flaking causes outsize anxiety for actors (2025). Meanwhile, Caron et al. identified that timing and honesty are the most important factors in how cancellations are received: advance notice signals respect, while last-minute avoidance is perceived as a violation of social norms (2023). Together, these findings reframed flaking not as a character flaw but as a behavior shaped by concrete social norms that could be reinforced through design.

Most directly actionable was Gollwitzer’s implementation intentions research, which demonstrated that failures of follow-through are rarely due to weak motivation, but instead stem from breakdowns at the point of action initiation (1999). Specifying when, where, and how a behavior will occur dramatically increases follow-through by making the response automatic rather than effortful.

Taken together, the literature pointed us toward an intervention focused on specificity, early commitment, and low-friction communication. Our full literature review can be found here.

Comparative Analysis

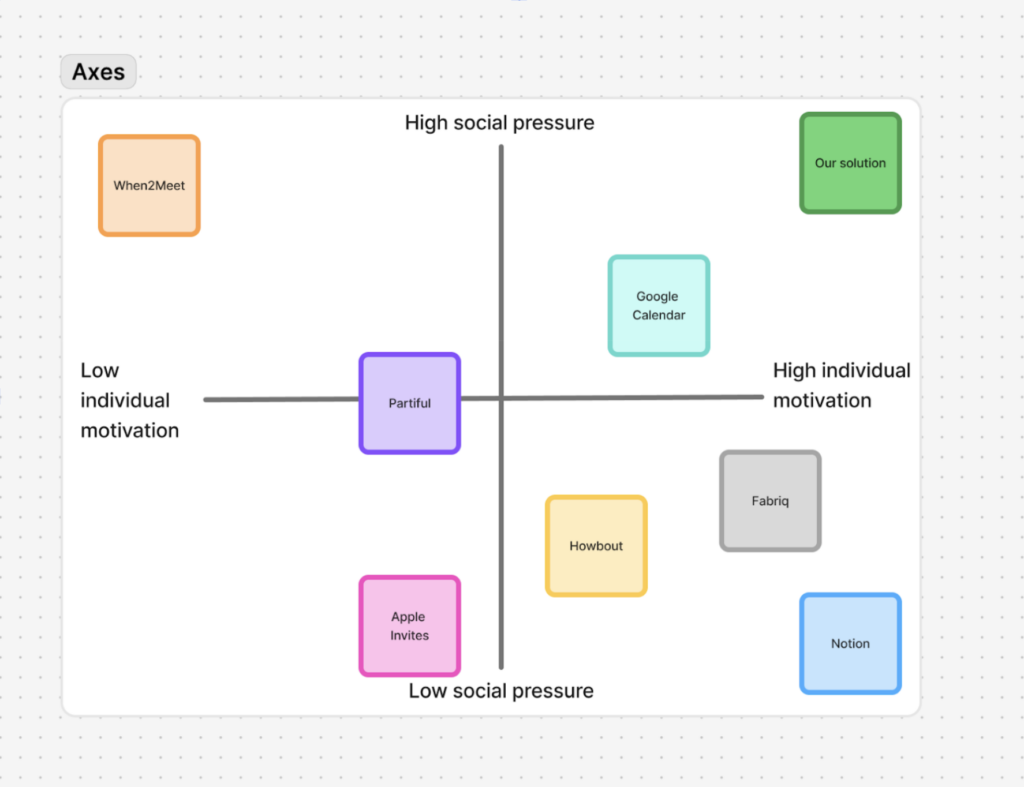

In our comparative analysis, we evaluated eight products across their target audiences, unique features, strengths, and weaknesses: Howbout, Google Calendar, Notion, Partiful, When2Meet, LunaTask, Apple Invites, and Fabriq.

Across products, we saw a consistent split. Personal productivity tools like Google Calendar, Notion, and LunaTask excelled at individual organization and memory, but did little to manage the interpersonal reality of coordination, uncertainty, and social repair. Social planning tools like Partiful, Apple Invites, Howbout, and When2Meet made it easier to create an event container and centralize logistics, but were largely constrained to RSVPs and scheduling. They did not deeply support follow-through, and they rarely addressed the psychology of uncertainty, commitment level, or respectful exit ramps. Fabriq came closest to bridging this gap with its relationship-tracking mechanics, but its nudges did not guarantee action. While it reminded users to reach out, it did not orchestrate plans or ensure follow-through once they did.

Most tools focused heavily on individual motivation features, such as tracking, reminders, and organization, with little attention to social pressure and shared accountability. Meanwhile, tools that did add visible social features often sacrificed individual planning depth, likely because users wish to keep their personal planning space private while still coordinating socially.

To visualize this, we mapped competitors across two axes: Individual Motivation (the level of personal effort or discipline required to use the product) and Social Pressure (the degree to which others influence a user to follow through on plans). This framing surfaced the core tension our product needed to resolve; users want enough social accountability to encourage commitment, without excessive pressure that discourages participation. No existing product occupied the high individual motivation, high social pressure quadrant, which became the target space for Sticki. Read our full comparative analysis here.

2×2 Competitive Map

2×2 Competitive Map: Individual Motivation vs. Social Pressure

We mapped scheduling products across two axes: Individual Motivation (the level of personal effort or discipline required to use the product) and Social Pressure (the degree to which others influence a user to follow through on plans).

While we initially explored other axes, such as ease of use, adoption friction, novelty, and user delight, we ultimately chose Individual Motivation vs. Social Pressure because these axes better represent the behavioral dynamics driving popular social planning tools. Many existing solutions rely heavily either on a user’s personal discipline (for example, maintaining a calendar) or on external pressure from others (such as tools that require group responses or coordination). The problem space was exciting because the top-right corner, where our future solution sat, was relatively unexplored in the competitive landscape, creating an opportunity for new approaches.

We designed our product to address the tension between these two factors: users often want to be more individually accountable and follow through on plans, but they also want to maintain the appearance of reliability to others. This creates a delicate balance, where an effective solution should provide enough social accountability to encourage commitment, while avoiding excessive pressure that discourages users from participating and requires an unrealistic level of individual motivation.

Baseline Study

Target Audience

Our target population was Stanford undergraduate and graduate students, since this group is socially active, time-constrained, and familiar with the kind of competing priorities that drive flaking. We recruited using a Google Form screener, and deliberately targeted students who had “room to improve” on follow-through, so the study would surface real friction rather than idealized behavior.

Our screening criteria were designed to ensure participants would generate meaningful data across the 5-day diary window. Participants had to be active enough in their social or extracurricular lives to be making or accepting plans at least once a week, and had to have canceled or rescheduled at least one plan in the prior two weeks. These criteria confirmed that they had demonstrated the target behavior, and the recent constraint implied that they flaked with some regularity. Students who rarely initiated or accepted plans were screened out, as they were unlikely to produce enough diary entries to be analytically useful.

We chose these criteria deliberately: recruiting for the behavior rather than just the demographic was important to us. A general student sample would have risked over-representing people with idealized social habits, which would have obscured the friction points we needed to understand. By requiring recent cancellation history as an entry condition, we ensured our participants were people genuinely navigating the tension between wanting to show up and finding reasons not to.

We also collected lightweight background data at the screener stage (response time for invitations and what tools participants currently use to track commitments) to help us contextualize patterns that emerged in the diary entries and distinguish between participants with different planning styles.

Study Overview

Our baseline study focused on “follow-through” in social and extracurricular commitments among busy Stanford students. We wanted to understand why plans that feel genuinely intended in the moment still end up getting forgotten, softened into “maybe,” rescheduled, or canceled close to the start time. The goal was to build a grounded picture of what actually drives flaking (and what makes follow-through easier) so we can design an intervention that helps students keep commitments they want to keep, and cancel responsibly when they should.

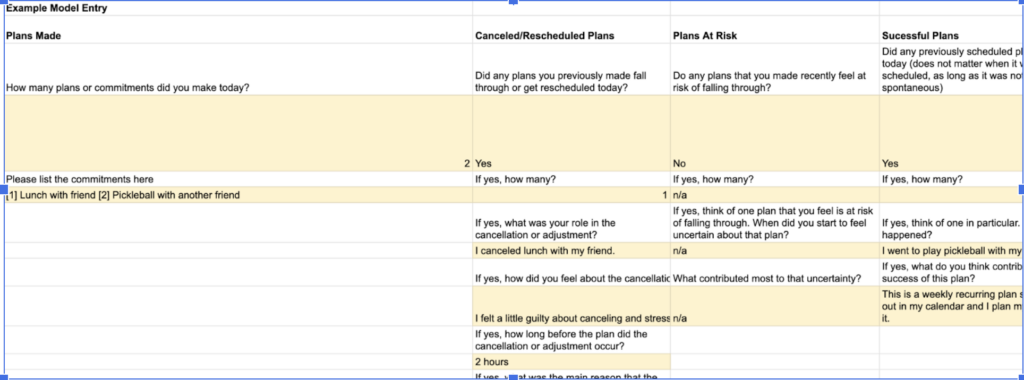

We ran a 5-day diary study over weekdays and weekends, paired with short pre- and post-study interviews. We had 8 participants, one of whom dropped out mid-study, leaving us with 7 complete datasets. The diary captured both quantitative and qualitative data: on the logistical side, participants logged counts of plans per day, whether plans were kept or changed, acceptance time, cancellation timing, distance from event start, and communication channel used. On the qualitative side, entries captured emotions at commitment time, reasons for uncertainty, what changed between committing and the event, and reflections on how the day’s commitments played out. Entries were submitted daily in a structured Google Sheet with a mix of short-answer fields and longer reflections, and participants could optionally attach artifacts like screenshots of messages to provide additional context. To reduce drop-off and keep entries consistent, our team sent daily morning reminders and conducted an evening check-in throughout the study window.
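To make the shape of the diary data concrete, the sketch below models one logged plan and computes a simple follow-through rate. It is illustrative only: the actual diary was a structured Google Sheet, and the class name, field names, and rate calculation here are hypothetical stand-ins for the fields described above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PlanEntry:
    """One logged plan from a diary day (field names are hypothetical)."""
    kept: bool                                    # True if the plan happened as committed
    channel: str                                  # communication channel, e.g. "text"
    hours_to_accept: Optional[float] = None       # time between invitation and acceptance
    hours_before_start_cancelled: Optional[float] = None  # None if never cancelled
    feeling_at_commitment: str = ""               # qualitative: emotion when committing
    what_changed: str = ""                        # qualitative: what shifted before the event


def follow_through_rate(entries: list[PlanEntry]) -> Optional[float]:
    """Share of logged plans that were kept; None when nothing was logged."""
    if not entries:
        return None
    return sum(e.kept for e in entries) / len(entries)


# Usage with made-up entries
week = [
    PlanEntry(kept=True, channel="text"),
    PlanEntry(kept=True, channel="in person"),
    PlanEntry(kept=False, channel="text", hours_before_start_cancelled=1.5,
              what_changed="problem set took longer than expected"),
    PlanEntry(kept=True, channel="text"),
]
print(follow_through_rate(week))
```

Keeping the quantitative fields optional reflects that not every plan is cancelled or has a recorded acceptance time.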

Part of a “model entry” provided to participants as an example

Example of a reminder email

Key research questions

- Where do plans fail? At what point does a plan become “at risk,” and what signals that risk early?

- What drives uncertainty and late-stage changes? What emotional, situational, and social factors push someone from “yes” to “maybe” to “no”?

- What makes commitments feel binding vs. flexible? How does commitment level vary by relationship type, event type, and effort required?

- What norms govern “respectful” cancellation? How do timing, channel, and explanation shape perceived fairness and relational impact?

- What support would actually help? What interventions could reduce forgetfulness and avoidance without making social life feel rigid, guilt-driven, or over-managed?

Synthesis: Baseline Study

Raw Data & Grounded Theory

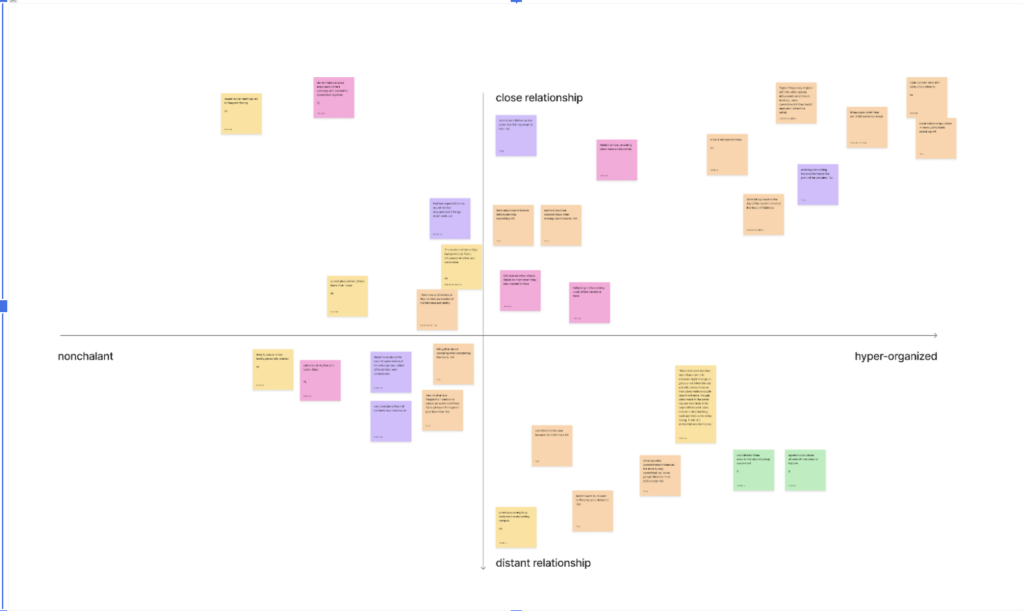

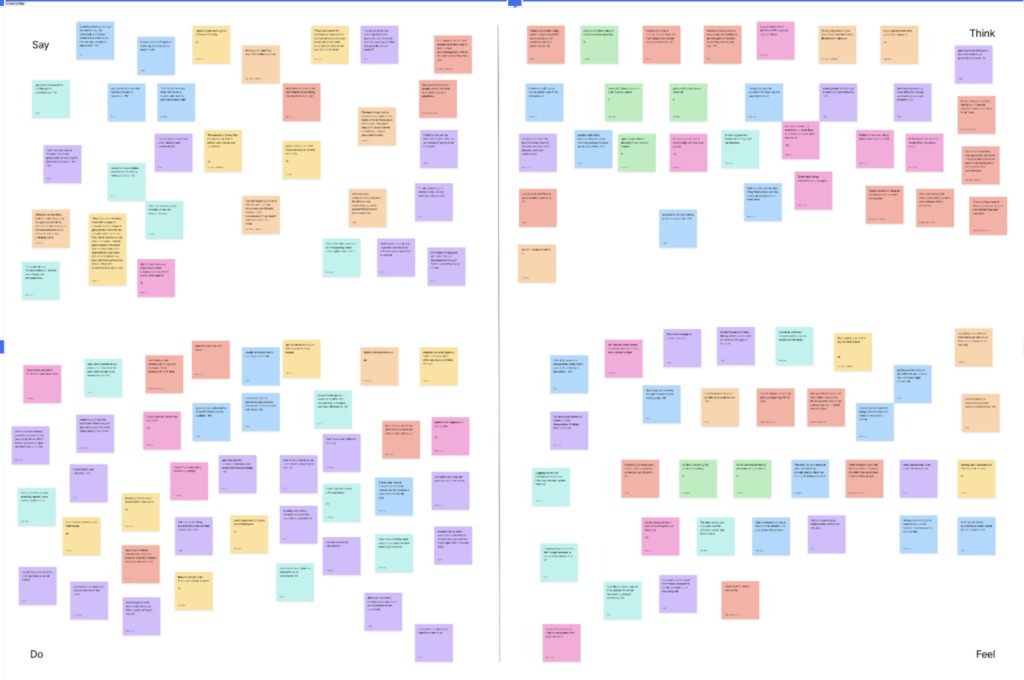

Across pre- and post-interviews and the diary study, we extracted key information onto sticky notes, color-coded by participant. Our full jam board can be viewed here.

Across our grounded theories, a few consistent themes emerged. Follow-through is strongly tied to personal investment, both in the plan and the co-participants involved, and people often feel neutral or even relieved about canceling, particularly when they feel their reason is defensible or the relationship is strong enough to absorb it. Perhaps most meaningful for our design was the finding that people often lack an accurate picture of their own social habits: several participants were genuinely surprised, after completing the diary study, by how frequently they had flaked.

One of the major insights from our grounded theory is that what makes people commit versus cancel is highly contextual and personal. Throughout our theories we identified several factors that affected flaking, though in many cases the specific effects were different across our participants. In some instances we even identified directly contradictory subtheories. In other cases we identified patterns that only held strongly for 1-2 participants, suggesting that our behavioral personas are an important driver of the behavior we are studying. Overall this suggests that our intervention should be highly adaptive, taking as contextual factors the habits and cues that make each individual likely to commit, follow through, or flake.

See our full grounded theory here: Grounded Theory Document

We organized this information according to various synthesis techniques, including empathy maps, affinity maps, 2×2s, and timelines. From these we inductively identified our grounded theories.

2×2 Grid

Timeline

Empathy Map

Affinity Map

System Models

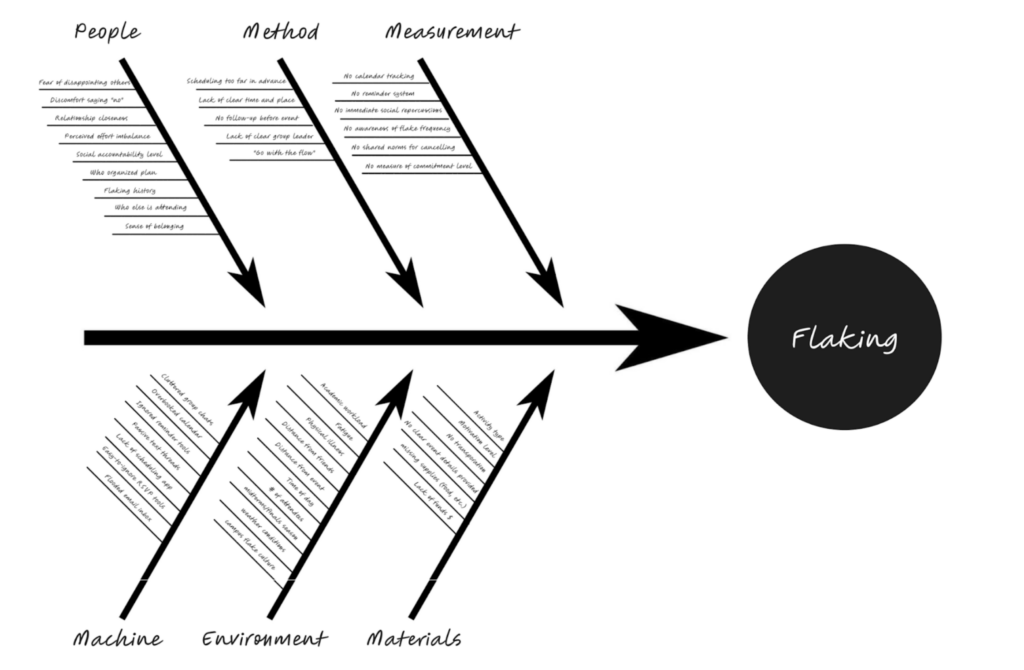

How Grounded Theory findings influenced the creation of the two models below:

- We identified that flaking is multi-factorial

- Effects vary across individuals

- Behavior is contextual and dynamic

- Based on the above insights, we want to highlight that different factors (people, method, measurement, machine, environment) can all contribute to flaking, which the fishbone model illustrates well

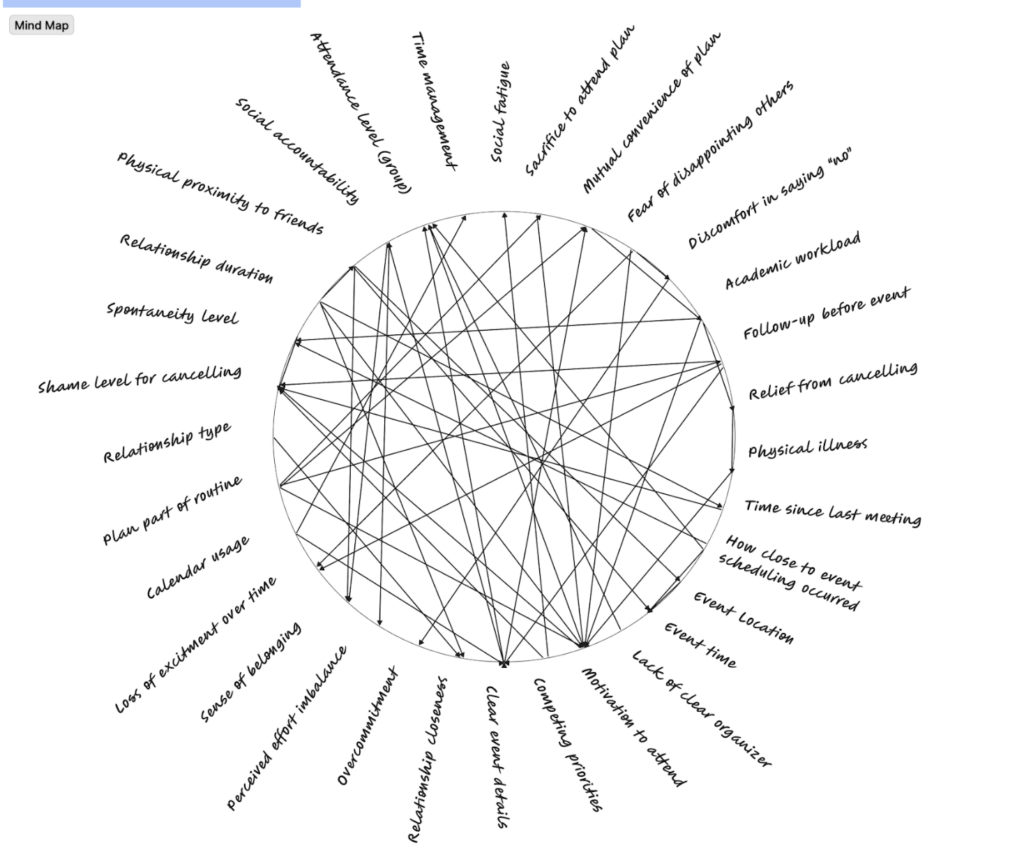

- We also identified that the factors interact with each other, forming cycles of behavior, which the mind map model captures

Model 1: Fishbone Model

- Insights

- Flaking is multi-causal: the diagram shows that cancellations are rarely caused by a single factor but instead arise from a combination of social, logistical, and contextual factors.

- Both internal and external drivers matter: causes span personal motivations (People), planning structure (Method), and environmental constraints (Environment).

- Planning quality strongly influences commitment: unclear event details, lack of follow-up, or vague plans frequently contribute to cancellations.

- Patterns

- Commitment strength depends on structure: plans that are more structured (clear time/place, organizer, reminders) are less likely to be cancelled.

- Social accountability increases follow-through: when relationships are closer or expectations are explicit, participants are more likely to attend.

- Ambiguity increases flaking: lack of clarity about plans consistently appears as a driver of cancellations.

- Contradictions

- Spontaneity vs planning: some participants prefer spontaneous plans and find rigid scheduling discouraging, while others require structured plans to commit.

- Social pressure vs autonomy: accountability can motivate attendance for some people but create avoidance or stress for others.

- Advance scheduling vs proximity scheduling: some participants cancel when plans are made too far in advance, while others cancel when plans are made too close to the event.

Model 2: Mind Map Model

- Insights

- Flaking emerges from interacting factors rather than isolated causes.

- Social, psychological, and logistical variables dynamically influence each other.

- Commitment and cancellation are influenced by temporal dynamics, such as time since last meeting or proximity to the event.

- Emotional responses (e.g., guilt, relief, social fatigue) play a large role in attendance decisions.

- Patterns

- Clusters of factors tend to co-occur:

- Workload → social fatigue → increased likelihood of cancellation.

- Strong relationships → accountability → higher follow-through.

- Clear event details → higher motivation → stronger commitment.

- Follow-up communication strengthens commitment by reinforcing social expectations.

- Relational context influences perceived obligation: closer relationships increase both guilt for cancelling and willingness to attend.

- Contradictions

- Relief vs guilt from cancelling: participants simultaneously report emotional relief after cancelling but also social discomfort.

- Competing priorities interact unpredictably: workload may cause cancellation for some participants but not for others depending on relationship strength.

- Motivation to attend is unstable: enthusiasm for an event can decay over time or increase due to social reminders.

Proto-Personas

Persona 1: “The People Pleaser”

Quick proto-persona “The People Pleaser”

Lisa, “The People Pleaser,” is a socially active student whose goal is to stay on good terms with everyone and earn their approval by being accommodating and valuable. She strives to maintain belonging and a positive image in relationships. The “People Pleaser” says yes to activities or commitments she doesn’t truly want to attend because she is afraid to say no.

She typically spends time in several social environments, including casual hangout locations (dorms, parties, campus), formal events, FaceTime and phone calls, and text messages (individual or group). To stay in the loop with social events, she uses several platforms, including messaging apps (iMessage, DMs, WhatsApp, Discord, Slack), social media (Instagram, Snapchat, BeReal, TikTok), Google or Apple Calendar, and her Notes app. Her key behaviors include reading social cues, appeasing others, writing sweet or apologetic text messages, managing emotions, smiling or avoiding conflict when stressed, over-apologizing, avoiding saying no directly (e.g., “we’ll see,” “I’ll let you know,” “maybe!”), putting others’ needs over her own, saying “yes” by default, and feeling guilty when choosing her own priorities. Lisa trades her time for social approval and ends up burned out, anxious, resentful, and “flaky.” She represents the segment of users who flake on social plans because of overcommitment, double bookings, and making more social plans than they can sustain.

Persona 2: “Academic Overcommitter”

Marcus, “The Academic Overcommitter,” is an academically driven student. His goal is to achieve academic excellence and secure future opportunities (internships, grad school, career prospects) while maintaining some semblance of social connection. His motivations include preserving academic standing, meeting personal achievement standards, and avoiding falling behind peers. Marcus optimistically commits to social plans during low-stress periods but cancels when academic pressure mounts, even when he technically has time, because he cannot mentally justify “fun” when work remains incomplete.

He typically spends time in academic spaces (library, lab, study rooms, office hours), dorms and apartments (where studying happens), coffee shops (dual study/social spaces), lecture halls, collaborative workspaces, and text or digital communication (where most cancellations occur). To manage his studies and maintain social plans, he uses calendar apps (Google Calendar, Outlook, Apple Calendar), academic management tools (Canvas, Blackboard, Notion, Todoist), messaging apps (iMessage, GroupMe, Discord), email, productivity apps (Forest, Pomodoro timers), and note-taking apps (Notion, OneNote, GoodNotes). He often engages in time estimation (poorly; he consistently underestimates workload), academic planning and organization, rationalizing decisions, crafting apologetic but academically justified cancellation messages, and finding productive study environments. His routines include checking academic deadlines multiple times daily, accepting social invitations when feeling on top of work, re-evaluating commitments as events approach, and studying late into the night before cancelling morning or afternoon plans. His habits include saying “yes” to social plans when his workload feels manageable (Monday–Wednesday), cancelling plans that fall near deadlines (even self-imposed ones), using academics as a socially acceptable excuse even when the cancellation is partially anxiety-driven, feeling phantom guilt about “wasting time” during social activities, and overestimating future free time (“I’ll have more time next week”).

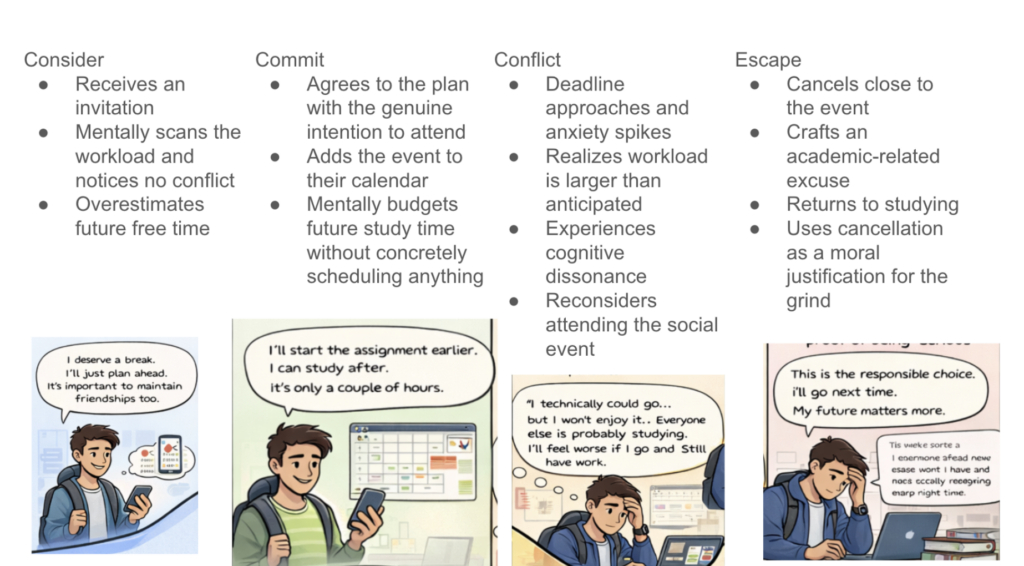

We created the “Academic Overcommitter” persona after observing a recurring pattern: participants consistently accepted social invitations with genuine intent to attend, yet cancelled last-minute when academic demands intensified (e.g., office hours or midterms). Interviewees described feeling torn between maintaining friendships and pursuing academic excellence, often overestimating their capacity to balance both. They reported saying yes to social plans during calmer periods, only to cancel when assignments, exams, or research deadlines suddenly felt urgent, even when these deadlines were known in advance. We synthesized these behaviors into the “Academic Overcommitter” persona, which represents users whose commitment-making and flaking patterns stem from fluctuating academic anxiety and a persistent underestimation of their workload.

Persona 3: The Chill Guy

Jordan, the “Chill Guy,” is a socially active student whose primary goal is to appear nonchalant, relaxed, and confident in social situations without seeming desperate, insecure, or overly invested. He is not indifferent; underneath the easygoing exterior, he is actively and carefully managing his social perception. His core motivation is social belonging without social risk: he wants to be seen as someone who is easygoing and cool to be around, because being visibly invested or anxious feels socially dangerous.

His key behaviors reflect this tension. He delays replies to avoid seeming eager, keeps his language deliberately casual, and downplays interest to avoid rejection. This creates an internal paradox: the more he tries to appear chill, the less chill he actually feels. His pain points stem from this gap between internal effort and external presentation: his communication style amplifies ambiguity rather than resolving it, which means plans stay vague, commitments stay soft, and follow-through suffers from a desire to avoid “killing the vibe” with directness. He succeeds at fitting in, but often at the cost of authenticity and clarity.

He represents the segment of users for whom the barrier to follow-through is not poor planning or overcommitment, but a deeply ingrained social performance that makes honest, specific communication feel risky.

Journey Maps

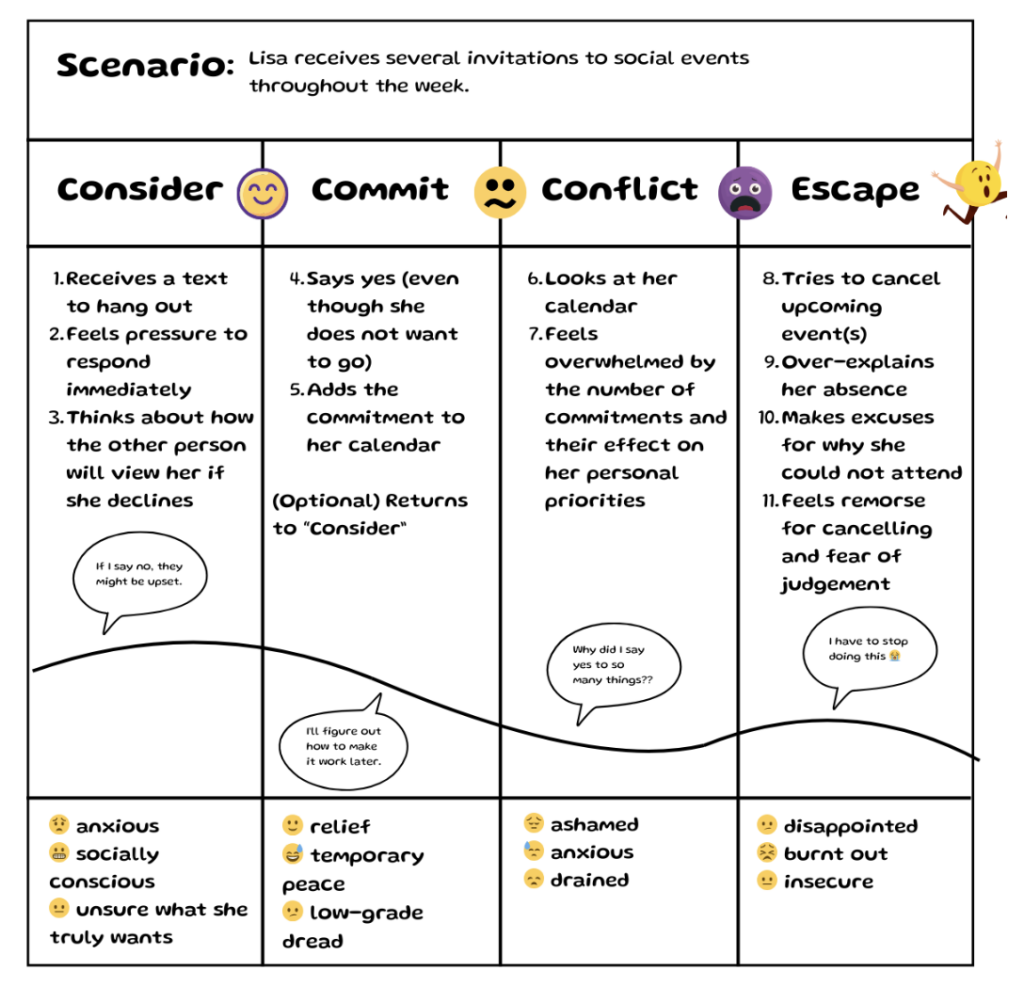

Journey Map — Persona 1: Lisa the “People Pleaser”

This journey map reflects Lisa’s experience juggling multiple social commitments alongside too many competing priorities. A typical cycle goes as follows:

Lisa (over)commits to multiple events → Asks herself if she really wants to go → Ignores her true desires and priorities → Ends up stressed, flaky, or resentful

When receiving a new invitation to spend time with friends, instead of feeling excited, Lisa often feels anxious or unsure of her desires due to her previous overcommitment to other social plans. As she plans how to balance this new commitment with others, she feels temporary relief when imagining how she could accommodate everyone. However, this initial peace does not last when Lisa imagines the logistics of attending each plan and how they conflict with her personal priorities—leading her to the emotional low of the diagram, where she faces emotions like shame, anxiety and disappointment when she eventually cancels.

Journey Map — Persona 2: Marcus “The Academic Overcommitter”

Marcus’s journey map reflects his experience balancing his academic goals with an invitation to a social activity during a traditionally low-stress academic window. At the start of the scenario, Marcus is hopeful and motivated to attend the plan. However, as the journey progresses and academic responsibilities add up, he finds himself increasingly anxious and less optimistic about his ability to attend—imagining that he will feel even worse about his academic commitments while attending the social activity. This leads Marcus to cancel and “escape” the commitment. At this stage, he feels relieved but guilty and socially insecure. When the next academic deadline emerges, the cycle repeats, and Marcus finds himself trapped in a cycle of academic anxiety and social withdrawal.

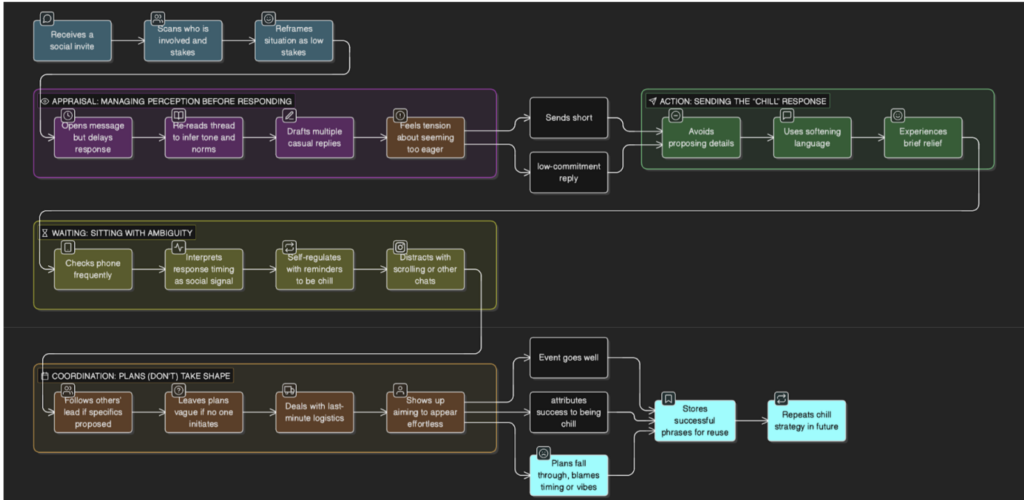

Journey Map — Persona 3: “Just a Chill Guy”

The chill guy’s journey map unpacks his seemingly nonchalant social participation. The map is organized around four key stages: appraisal, action, waiting, and coordination, which each reveal a different layer of the performance that “being chill” actually requires. In the appraisal and action stages, Jordan does significant internal work to project effortlessness. He delays his response, re-reads the thread to infer social norms, and drafts multiple replies before landing on the shortest, most noncommittal one that avoids proposing any details. In the waiting and coordination stages, he checks his phone frequently, interpreting response timing as a social signal. When plans do take shape, he leaves logistics vague until the last minute to avoid proposing specifics.

The key pain point is the paradox at the center of Jordan’s behavior: his strategy for avoiding social risk actively creates the conditions for plans to fall through. The intervention opportunity here is to lower the social cost of being specific, or to normalize direct communication within his existing messaging context.

Intervention Design

Ideation

The ideation process focused on addressing the core issue of “flakiness” by leveraging research insights regarding social pressure, plan specificity, and impulsive commitment.

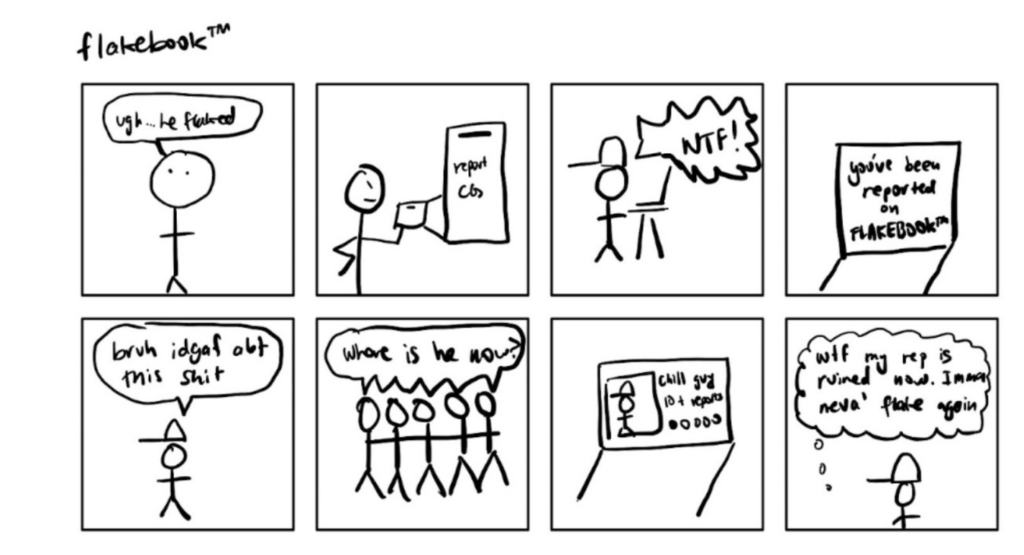

Idea 1: Public Shaming (The “Flakebook” Leaderboard)

This “dark horse” concept leverages social pressure by creating a public leaderboard where users can anonymously nominate “flaky” people. It addresses the insight that people often underestimate their own flakiness and are motivated by how others perceive them.

- Pros: High social pressure; particularly effective for a “chill guy” persona who might otherwise be indifferent to personal scheduling tools.

- Cons: Significant ethical concerns; relies on negative reinforcement (shame) rather than positive motivation; logistically difficult to test without a highly interconnected friend group.

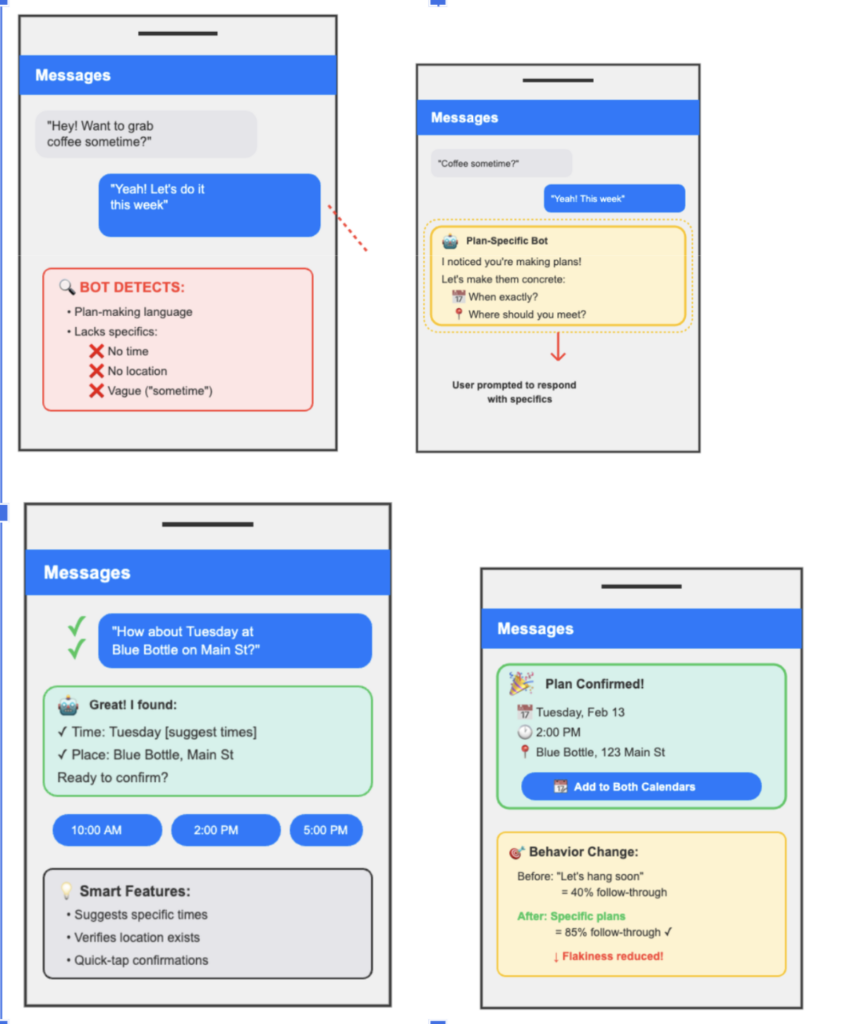

Idea 2: Scheduling Bot

A chatbot that monitors message threads to detect “plan-making language.” When it detects vague plans (e.g., “coffee sometime”), it intervenes to prompt for specific times and locations, then visualizes the confirmed plan for both parties.

- Pros: Significantly reduces scheduling friction; requires little effort from the user; directly addresses the insight that vague plans are less likely to be followed through.

- Cons: High technical barrier for simulation (researchers would need access to private chats); calendar integration is complex; doesn’t address the underlying lack of motivation or social accountability.

Idea 3: Mindfulness Intervention

This intervention targets the moment an invitation is received, requiring participants to complete a short journaling exercise or reflection before committing. It aims to disrupt the habit of saying “yes” out of politeness or instinct to plans the user isn’t actually interested in.

- Pros: Logistically simple to implement; directly addresses the “intention-to-action gap”; helps users understand their own social behaviors and decision-making cycles.

- Cons: Risk of low participant compliance (forgetting to journal); less “creative” than other tech-heavy solutions.

Final Selection Rationale

The team chose a hybrid intervention that simulates a “bot” via text message. This solution was selected because it combines the strengths of all three ideas: it reduces scheduling friction (Scheduling Bot), increases motivation via a “FlakeScore” (Public Shaming/Social Pressure), and encourages meta-awareness through daily reflections (Mindfulness).

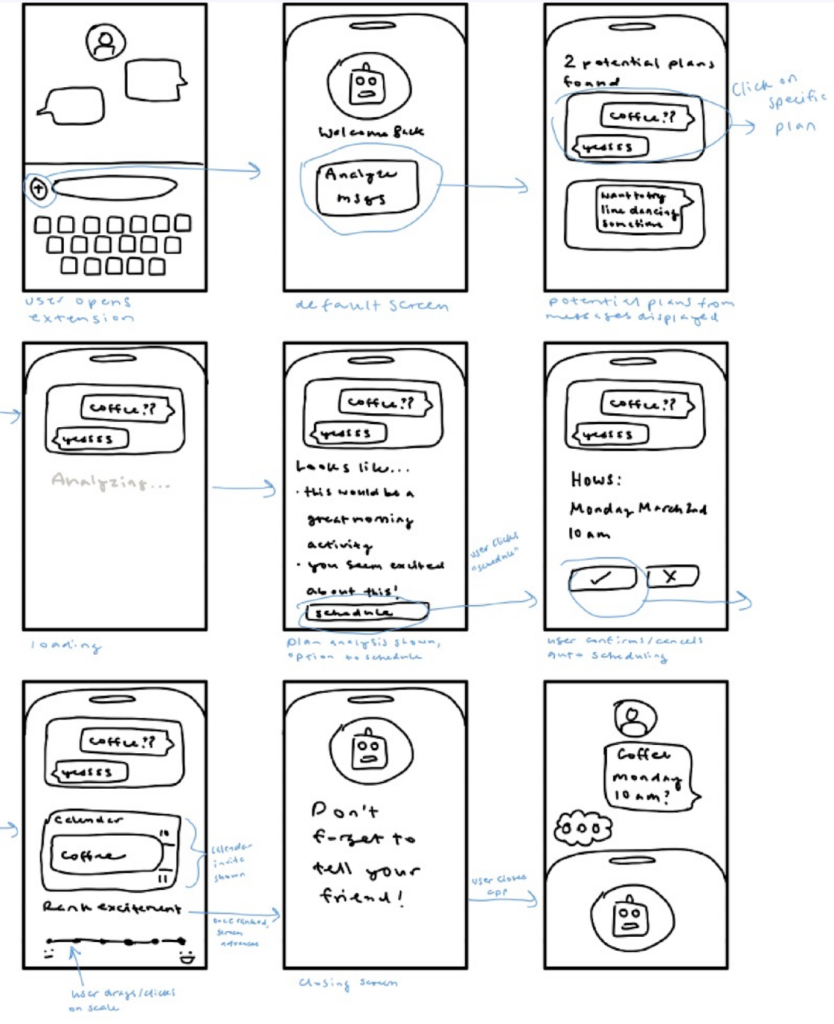

Storyboards — Top 3 Ideas

Storyboard 1: Public Shaming (Flakebook)

From our research, we learned that people often underestimate their own personal flakiness, and that social pressure is an important motivation in getting people to commit to and follow through with plans. Leveraging these insights, one intervention idea is a public leaderboard for flaky people. Others can anonymously nominate people to be added to the board.

We would simulate this in an intervention by testing with a friend group and encouraging people to submit names of those who have flaked on plans. The key hypothesis of this intervention idea is that learning of being perceived as “flaky” by others could motivate participants to change their behavior and increase their follow-through.

Storyboard 2: Scheduling Bot

Two factors strongly influence whether people stick to their plans: the interval between making a plan and the plan itself, and how often the plan is confirmed. To help people be more mindful when they make plans and move from ambiguous plans to specific ones, we propose a chatbot intervention that detects when people are making plans.

When a potential plan is detected, the bot will determine whether the plans are specific enough and intervene to make sure that the detailed plans (e.g. time and location) are confirmed. Once the plan is confirmed, the bot will visualize the plan for both people and keep it in the interface to make sure that both are aware of the plan.

Storyboard 3: Mindfulness Intervention

Through our research we learned that people often commit to events and plans that they have little interest in, out of instinct or politeness. This mindfulness intervention is designed to target participants at the time they receive the invitation, encouraging them to think more deeply about the implications of committing or declining.

The aim of the intervention is to prevent participants from committing to plans that they are not interested in, and to help them feel more excited about (and thus, according to our research, stay more committed to) the plans that they are interested in. As an intervention, this would be implemented as participants completing a short journaling exercise whenever they receive invitations, or before they send invitations, and potentially periodically after committing.

Selection Rationale

The final selection is a simulated “bot” intervention delivered via text message that combines elements from all three brainstorming directions to provide a more holistic solution. This hybrid approach was chosen as the strongest solution because it targets the three core drivers of flakiness identified in the research: scheduling friction, low individual motivation, and impulsive commitment habits.

Assumption Map & Assumption Testing

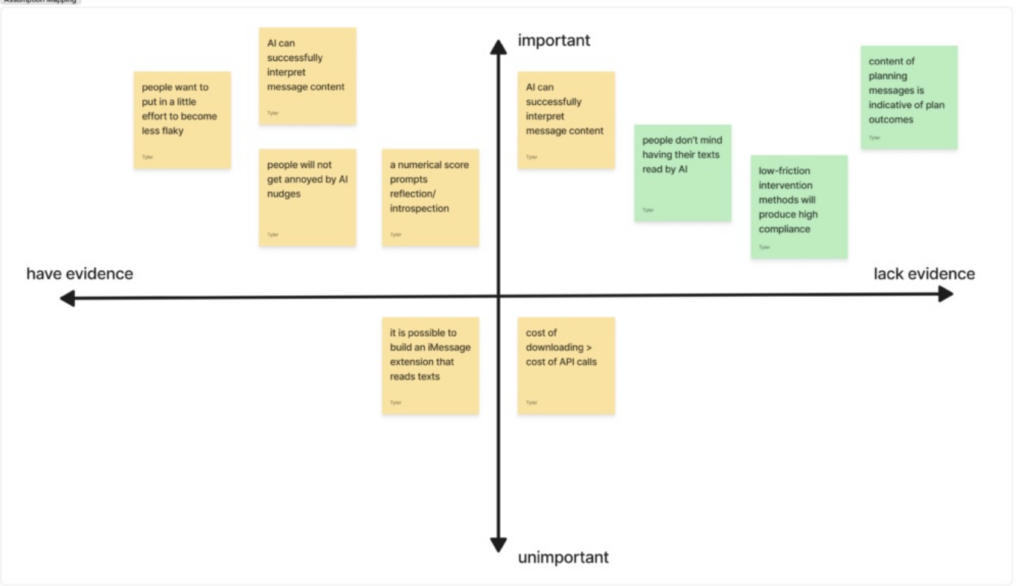

Assumption Map

Assumption Test 1: People don’t mind having their texts read by AI so long as there are significant privacy constraints.

- How tested: Participants are asked to share planning-related text messages with their preferred AI platform, and researchers observe their qualitative reactions and follow-up explanations.

- Insights: While the sources do not provide finalized test results, they highlight that a major “con” of the Scheduling Bot is the logistical difficulty of simulation, specifically the concern that participants might have to add researchers to private group chats. This suggests a high level of sensitivity regarding privacy and group dynamics.

- Impact on solution: To mitigate these concerns while still testing the concept, the team transitioned to a hybrid “simulated bot” intervention where participants send screenshots of planning messages to the “bot” (the researchers) via a separate SMS thread, allowing for a controlled boundary between private chats and the intervention data.

Assumption Test 2: The manual friction of taking screenshots and submitting daily forms is the primary deterrent to consistent plan logging and effective real-time intervention.

- How tested: A comparative test is conducted between a group using the manual “screenshot-to-bot” method and a group using an embedded iMessage app prototype that automatically detects plans; metrics include the percentage of plans logged and the delay between plan-making and bot interaction.

- Insights: Preliminary research identified friction as a major barrier to intervention success. The manual intervention design currently requires participants to submit screenshots or fill out 20-second forms for every plan, which runs the risk of “slacking” or of participants only logging plans at the end of the day.

- Impact on solution: The intervention design emphasizes “quick-tap confirmations” and “show-up scripts” to minimize effort for the user. The goal is to move from a 40% follow-through rate for vague plans to an 85% rate by making the logging process as seamless as possible.

Assumption Test 3: The content of planning-related messages is indicative of whether or not plans fall through.

- How tested: Researchers read planning-related text messages to see if they can discern specific details (location, time, punctuality) and predict the final plan outcome from the texts alone.

- Insights: The intervention is built on the premise that vague plans (e.g., “coffee sometime”) have significantly lower follow-through (40%) than specific plans (85%). The bot is designed to specifically detect when plans lack details like time and location.

- Impact on solution: This insight led to the creation of “Plan Risk labels.” If a participant’s message is identified as “fuzzy” or lacking specifics, the bot labels it as a “Medium” or “High” risk and prompts the user to “lock time + place now” to increase the likelihood of commitment.
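The risk-labeling idea above can be sketched in a few lines. This is a minimal illustration, not the bot's actual logic: the regular expressions, the `plan_risk` function name, and the rule that one missing detail means “Medium” while two mean “High” are all assumptions made for the example.

```python
import re

# Hypothetical patterns: the report does not specify how details are detected.
TIME_PATTERN = re.compile(
    r"\b(\d{1,2}(:\d{2})?\s?(am|pm)|noon|midnight|tonight|tomorrow|"
    r"monday|tuesday|wednesday|thursday|friday|saturday|sunday)\b",
    re.IGNORECASE,
)
# A preposition followed by a capitalized word, e.g. "at Coupa".
PLACE_PATTERN = re.compile(r"\b(at|in|near|outside)\s+[A-Z][\w']+")


def plan_risk(message: str) -> str:
    """Label a planning message by how many specifics it is missing."""
    has_time = bool(TIME_PATTERN.search(message))
    has_place = bool(PLACE_PATTERN.search(message))
    if has_time and has_place:
        return "Low"
    if has_time or has_place:
        return "Medium"  # assumed: exactly one detail missing
    return "High"  # assumed: both time and place missing
```

Under these assumptions, `plan_risk("coffee sometime")` is labeled “High,” which is the case where the bot would prompt the user to “lock time + place now.”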

Reflection

Several critical assumptions remain even after testing. A primary concern is whether the FlakeScore, a composite score that penalizes no-shows (-8 points) and rewards showing up on time (+4 points), actually motivates long-term behavior change or if it primarily induces social anxiety. Furthermore, the study relies on a very small sample size of 6-10 participants, which may not capture the diverse social habits of different personality types, such as the “Chill Guy” versus the “Over-Committer.”

A potential flaw in these tests is that the “bot” is simulated by researchers, which may cause participants to behave more reliably than they would with a real, automated AI due to the social pressure of knowing a human is reading their logs. To approach this differently in the future, we would implement a fully automated functional prototype to eliminate human-interaction bias and expand testing to include diverse messaging platforms beyond SMS to better reflect real-world usage.

Intervention Study

The goal of our intervention study was to test whether a combination of real-time plan monitoring, dynamic scoring, and behavioral feedback could meaningfully reduce flakiness among socially active students. Specifically, we wanted to understand whether surfacing a person’s own planning patterns, through risk labels and a composite FlakeScore, would increase meta-awareness and shift behavior toward more specific, reliable commitments.

Our key research questions were:

Did commitment and follow-through change over the course of the study?

Were the risk labels provided by the bot accurate to users’ actual behavior?

How did participants emotionally respond to receiving a numerical score tied to their social reliability?

The study ran for five days, with 6 participants — 2 returners from our baseline study and 4 new recruits. Each day, participants submitted screenshots from text message conversations related to planning or executing a social plan. For the first two days we collected screenshots without intervention, using that data to establish baseline scores. From day three onward, participants received daily FlakeScore updates (0–100) based on the quality of their planning communication and the degree to which they followed through on commitments. Good social hygiene increased the score, and flakiness decreased it. We originally planned to provide scheduling suggestions alongside the scores, but quickly realized that participants tended to submit screenshots only after a plan was already fully formulated, making scheduling assistance redundant. We learned that artifact submission affects the behavior being measured, which led us to realize that any effective solution must be embedded rather than external.

Our results were somewhat mixed yet meaningful. The most consistent finding was that meta-awareness alone was a catalyst for change. That is, simply having to report plans made participants more conscious of their behavior. One participant realized how often they actually rescheduled, and another was surprised to find they were making more plans than they previously thought. The power of low-risk labels was an unexpected finding: being told a plan was “low risk” actually motivated follow-through, with one participant noting that flaking on a low-risk plan felt worse because the bot’s framing had normalized the expectation of showing up. However, the score had variable emotional impact. Some participants found it validating and fun, while others experienced it as an attempt to shame them. Several participants noted that the AI-generated suggestions felt too verbose and impersonal to be useful in a real texting context. These findings directly shaped our final design, pushing us toward an embedded iMessage integration, more concise and authentic messaging, greater transparency in how scores are calculated, and a nonjudgmental framing of the score. Further details about our intervention study can be found here.

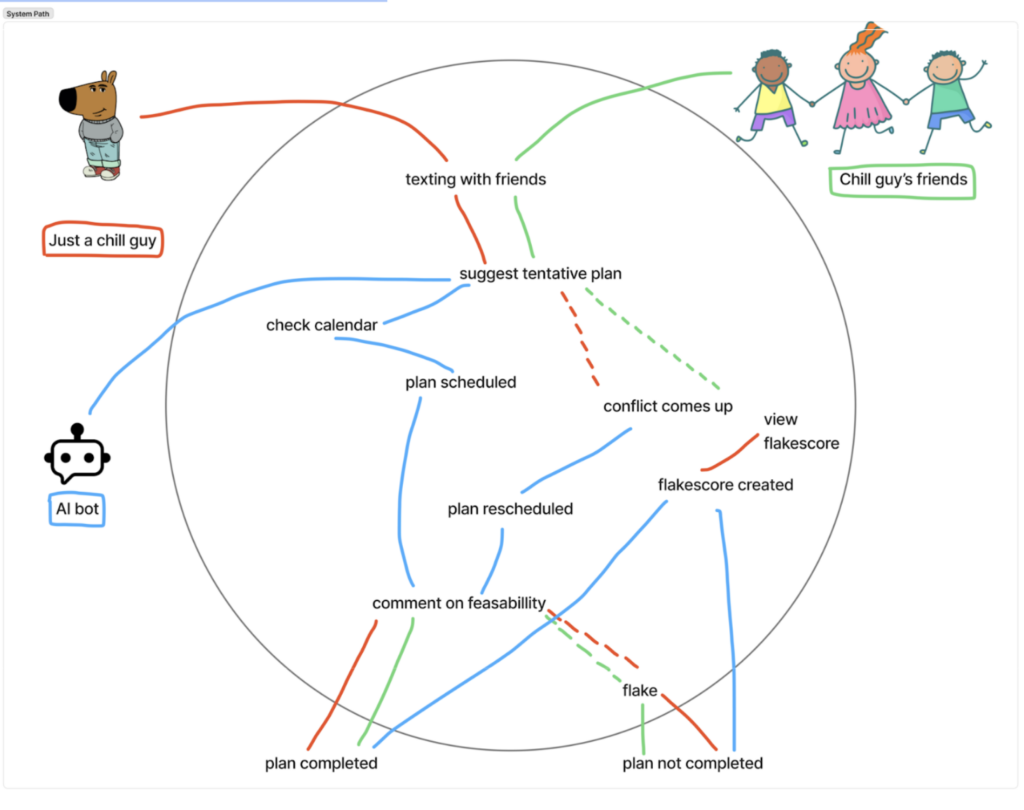

System Paths

The system path follows a participant’s interaction with the simulated “bot” across a typical day.

System Path — Persona: Chill Guy

To create our system path, we identified the key actors: our chosen persona (a “chill guy”), our persona’s friends and co-planners, and our social bot. The path starts with our persona and their friends, as they have discussions over text. The bot automatically joins the path whenever social plans are discussed, facilitating seamless planning. In the planning phase, the bot dominates, managing scheduling and coordination details. Our persona and their friends reenter the path towards the end, turning planning into execution. The path also documents other potential flows with dashed lines, such as when rescheduling is necessary, or when some/all parties flake.

Reflection

The insights from our research, particularly that individuals often underestimate their own flakiness and that vague plans (e.g., “coffee sometime”) have low follow-through, fundamentally shifted our design. Initially, we considered a “Public Shaming” leaderboard, but moved toward a private, data-driven “FlakeScore” to provide the same social pressure and self-awareness without the ethical risks of a public platform. If we were to do this differently, we might integrate automated calendar scanning earlier; our current design relies on manual screenshot submissions, which may introduce friction that contradicts our goal of making the user’s life easier.

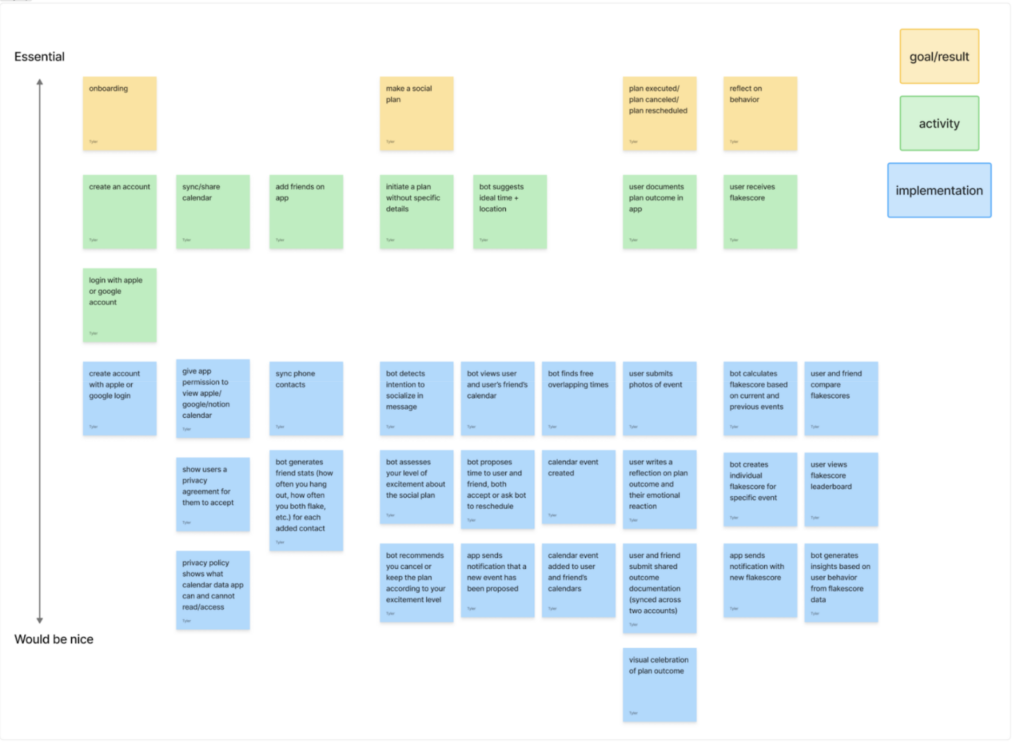

Story Maps

We identified the core goals, actions, and implementation details at each step in the user’s journey. We built the map from the top down, focusing on core needs and processes first and brainstorming dopamine-producing “nice-to-haves” later on. Building out the implementation section top down also gave us more awareness of the technical processes and backend pipelines that were necessary to make the user experience seamless.

Aside from onboarding, our story map included three core goals or outcomes: plan formation, plan execution, and post-event reflection. These three categories provided three core feature areas from which we created our MVPs. More details on the relationship between our story map and MVP features can be found in the following section.

MVP Features

Alpha (must-have):

- Dynamic FlakeScore: A composite score (0-100) that fluctuates based on plan outcomes (e.g., +4 for showing up, -8 for a no-show) to provide immediate accountability and motivation.

- Automated Calendar Integration: Automatically suggesting free blocks from a user’s calendar to reduce the friction of manually checking availability.

Beta (nice-to-have):

- Plan Risk Labeling: A diagnostic tool that flags “Medium” or “High” risk plans when time or location are missing, directly addressing the insight that specificity increases follow-through from 40% to 85%.

- Nightly Reflection Diary: A 1-2 sentence reflection component to increase meta-awareness of social behaviors and disrupt impulsive “polite” commitments.

- “Show-up” Scripts: Pre-written texts provided 2 hours before an event to help users navigate the social anxiety of confirming or arriving late.
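To make the Dynamic FlakeScore mechanics concrete, here is a minimal sketch of the update rule. The +4 and -8 point values and the 0-100 range come from the feature description above; the outcome names, the `update_flake_score` and `replay` helpers, and the choice to ignore unrecognized outcomes are assumptions for illustration only.

```python
# Point values from the report; the outcome names are hypothetical labels.
POINTS = {"showed_up_on_time": 4, "no_show": -8}


def update_flake_score(score: int, outcome: str) -> int:
    """Apply one plan outcome and clamp the result to the 0-100 range."""
    delta = POINTS.get(outcome, 0)  # assumed: unknown outcomes change nothing
    return max(0, min(100, score + delta))


def replay(start: int, outcomes: list[str]) -> int:
    """Apply a sequence of outcomes in order, starting from a given score."""
    score = start
    for outcome in outcomes:
        score = update_flake_score(score, outcome)
    return score
```

Because a no-show costs twice what an on-time arrival earns, two kept plans are needed to offset a single flake. The starting score is left as a parameter because the report derives it from baseline data rather than a fixed value.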

Bubble Map

Our bubble map was constructed around the core insights from our interviews. The main reasons that people flake became the three orange bubbles. We then added medium-sized bubbles representing different parts of our solution. Nitty gritty details, which are represented by the smallest circles, are mapped onto the relevant solution. Mapping our intervention visually here allowed us to see which features and solution components mapped onto which needs. The retrospective flake score, for example, which was the core of our intervention study, is driven by flaking behavior, and thus is related to each of the three reasons for flaking. That our bubbles were generally concentrated around the top left orange circle, which centered on plan vagueness, illustrates that most of our solution features address this need.

Interaction Design

Low-Fidelity Prototype

Wireflows

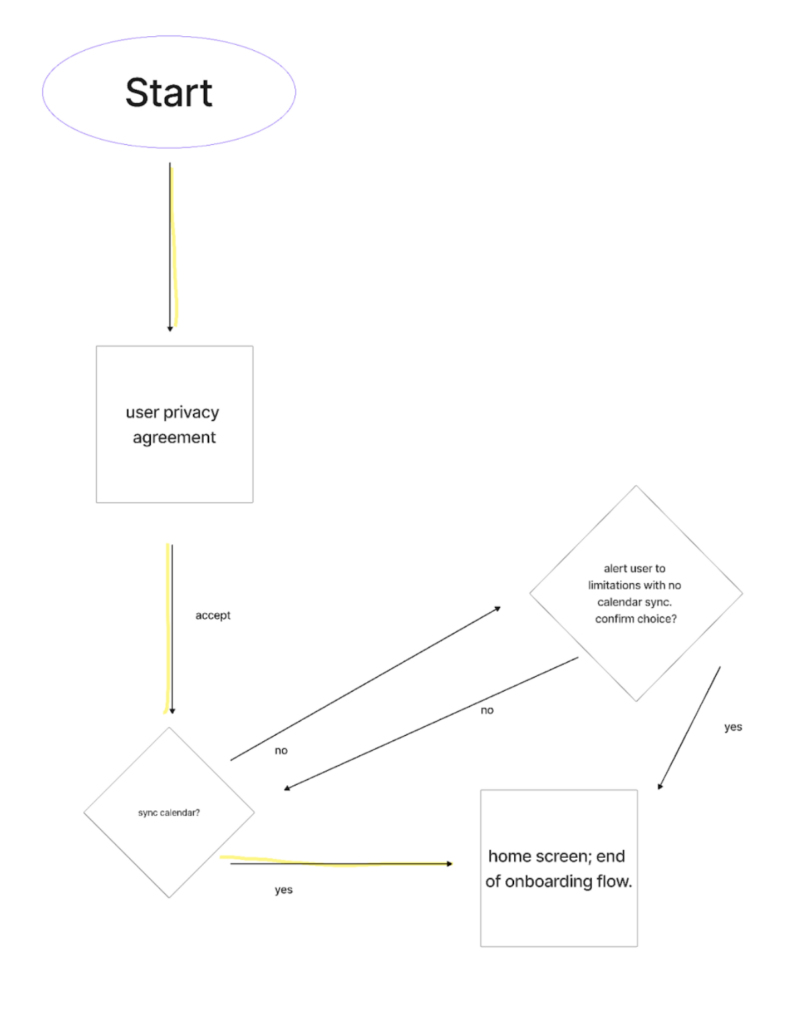

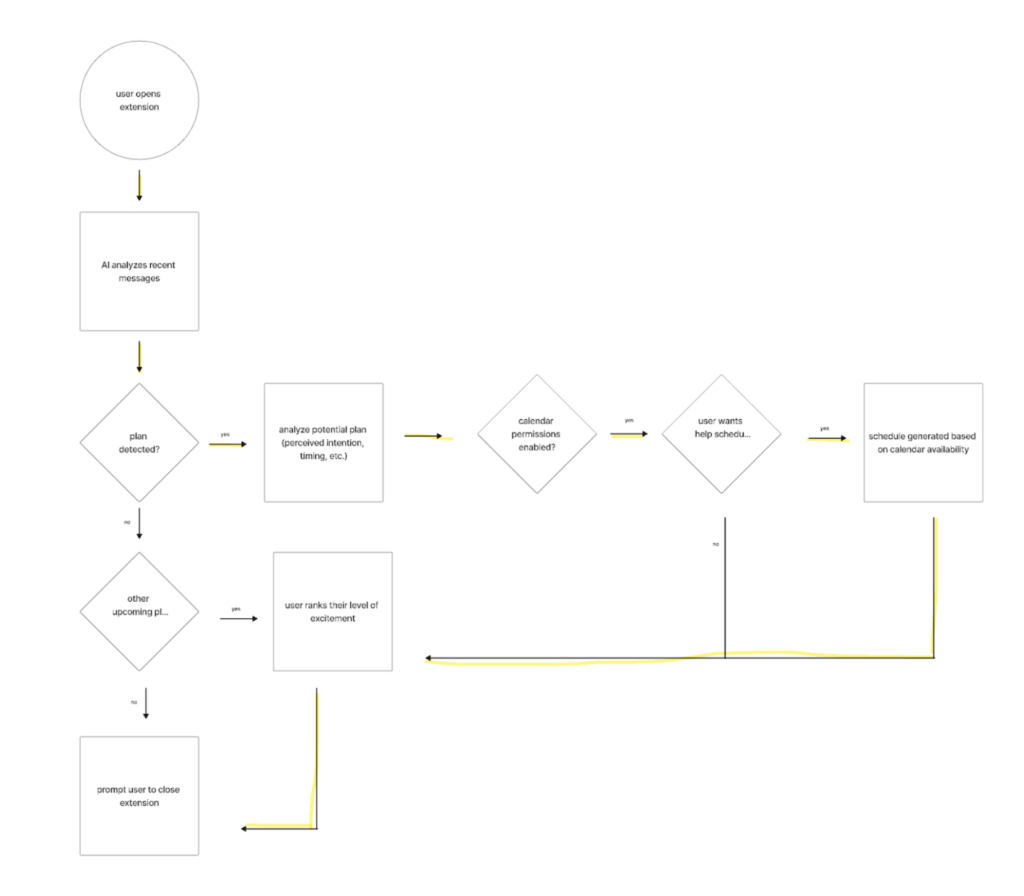

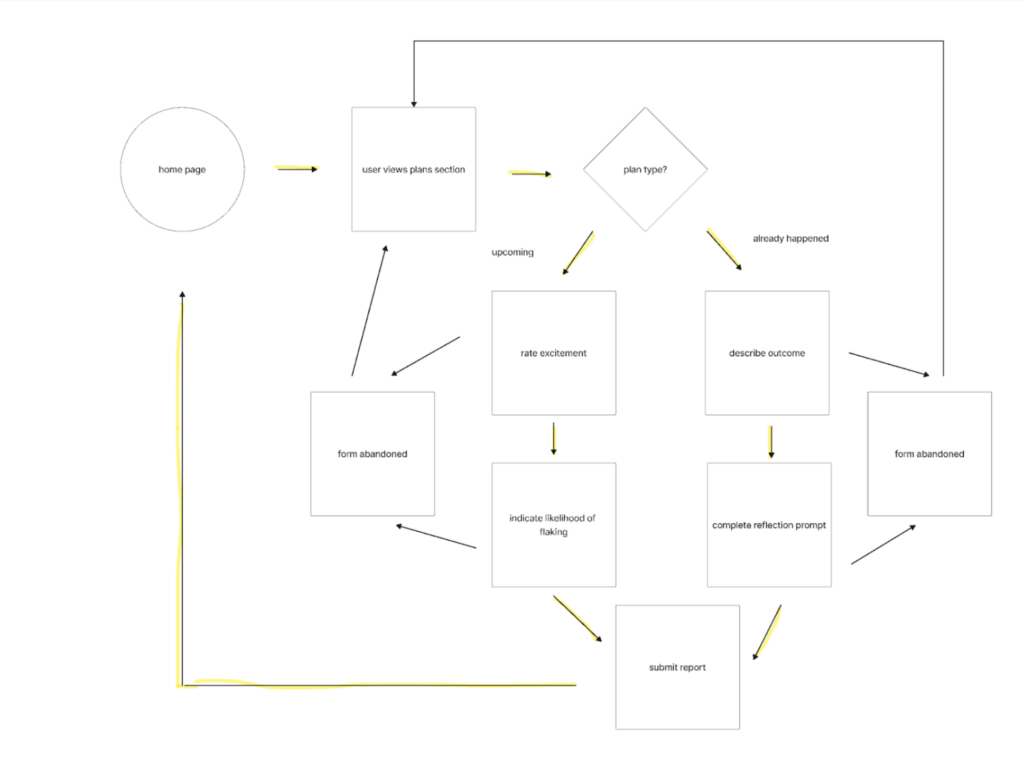

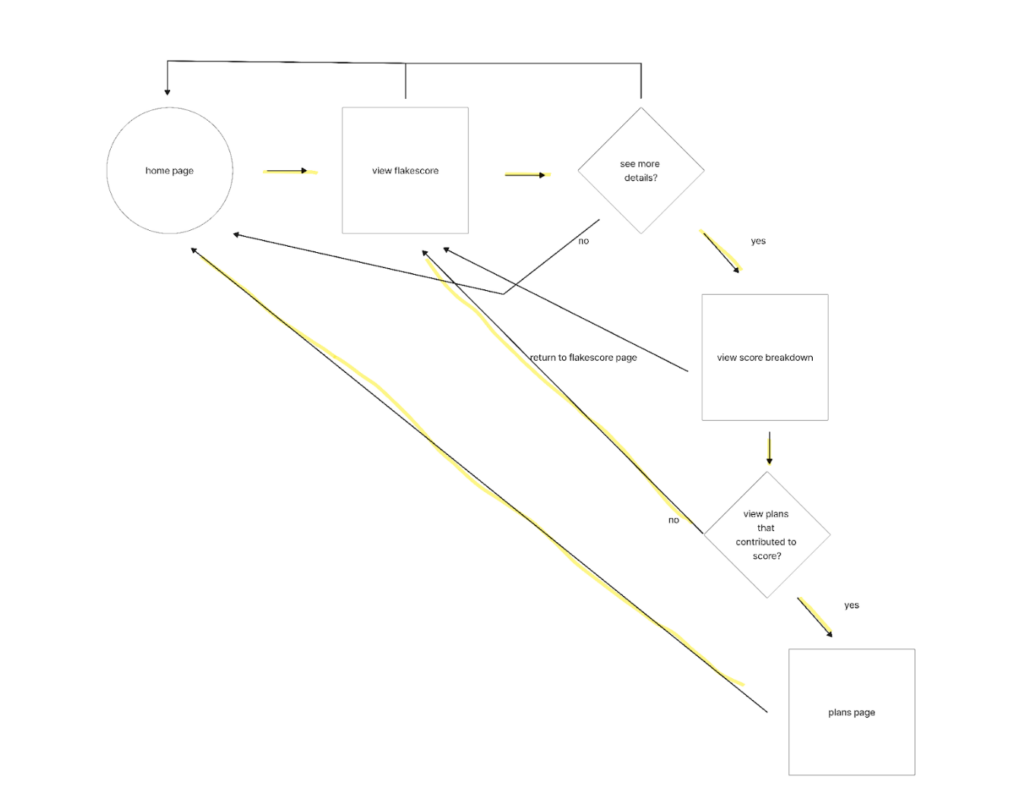

We developed four main wireflows: one for each of our main tasks, and one for onboarding. The happy path(s) in each wireflow are highlighted in yellow and further described below.

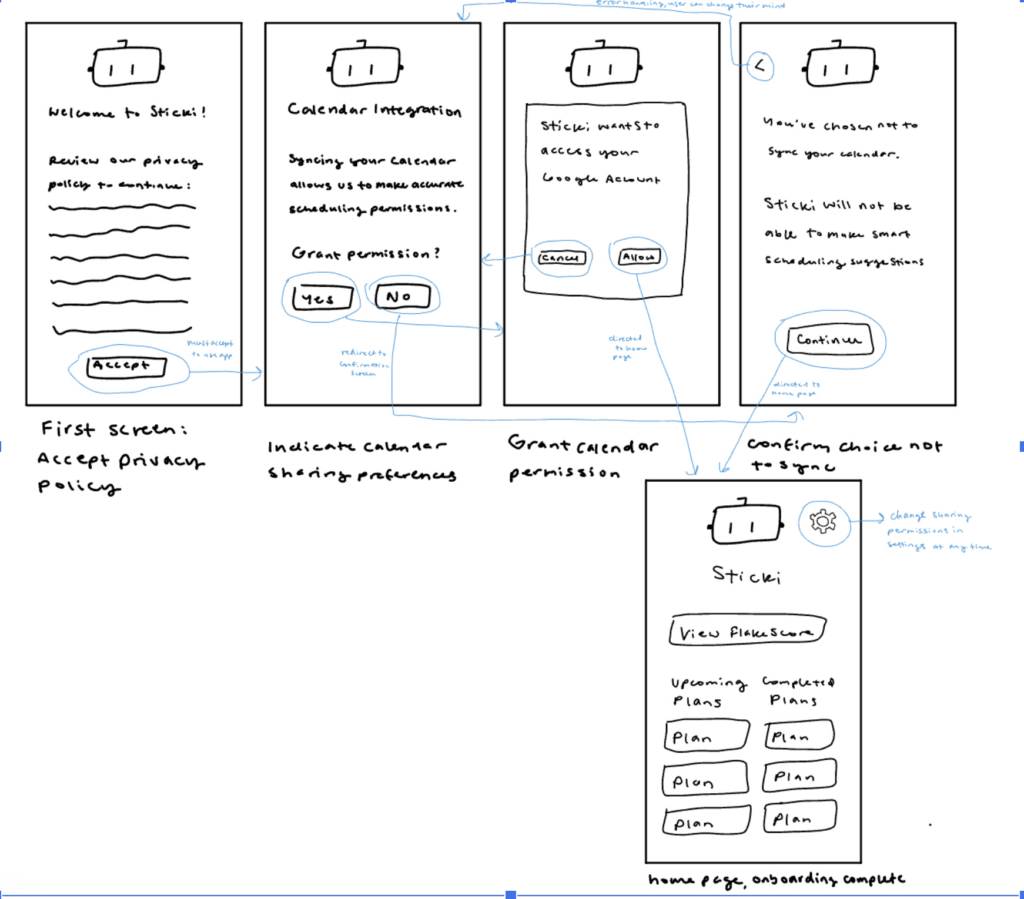

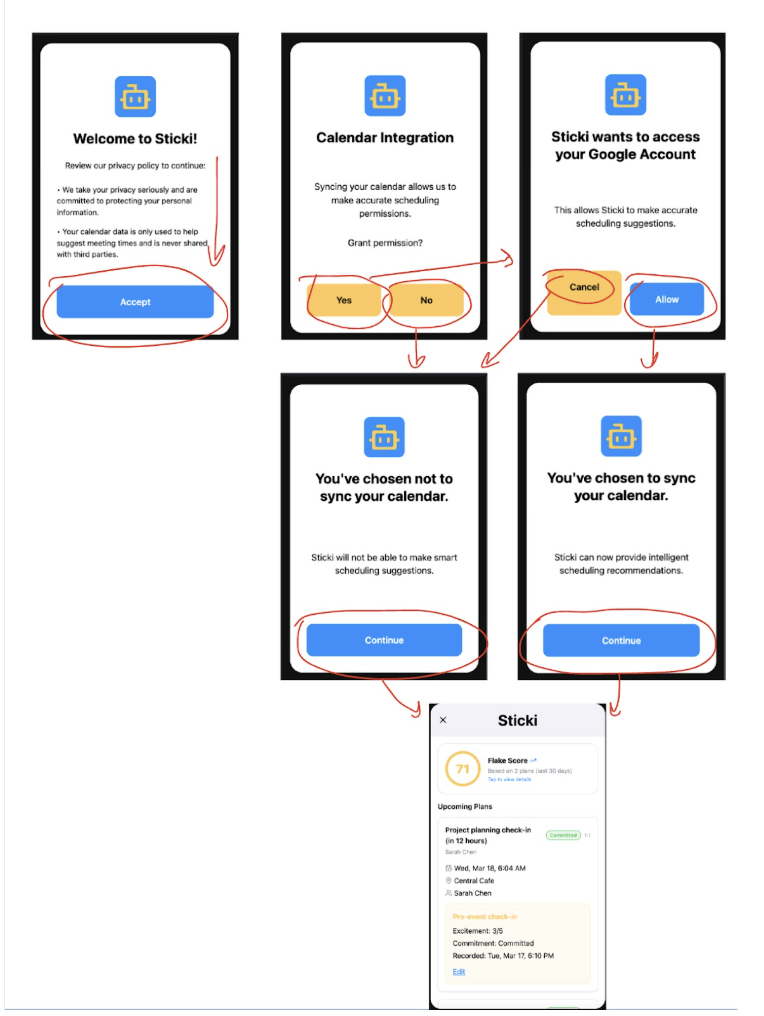

Onboarding

Our onboarding flow presents users with our privacy policy, which they must accept to use the app, and gives them the option to sync their calendar. Since calendar information is important to our scheduling flow, in the happy path the user grants calendar permissions. Although calendar sync is optional, we included a small nudge to encourage users to share their calendar, since the app functionality is limited without calendar integration. Ultimately the choice is up to the user, and both choices are supported in our wireflow’s decision tree.

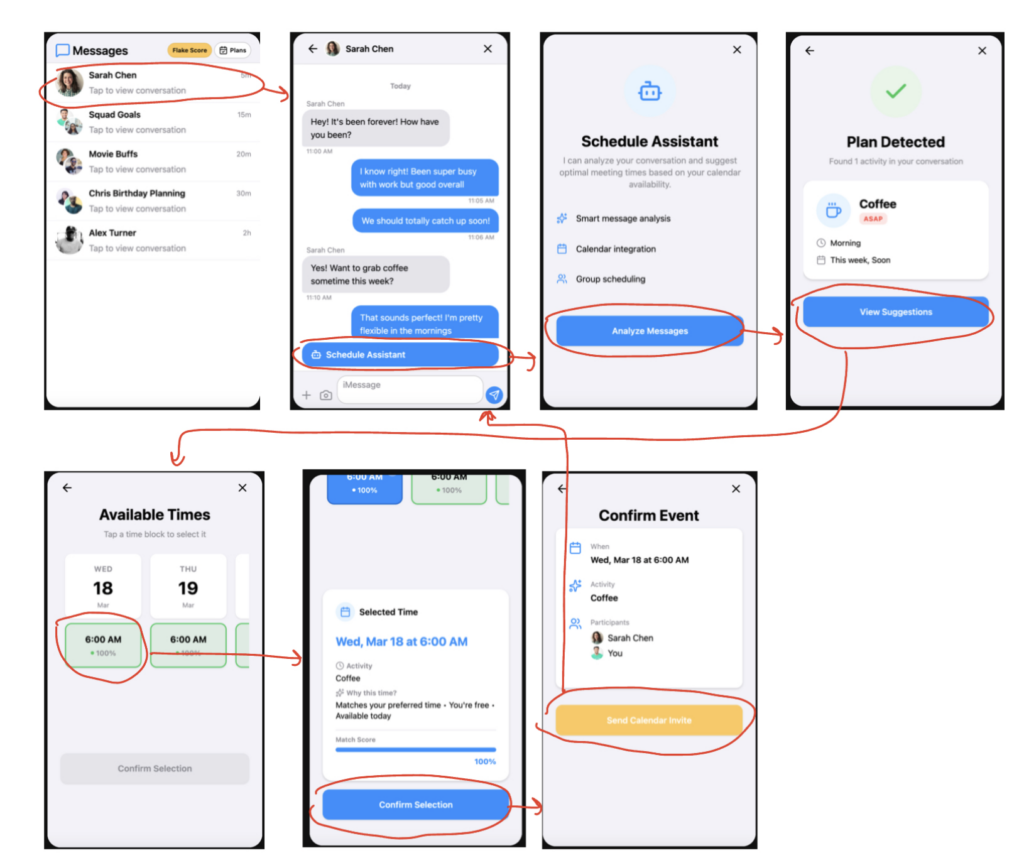

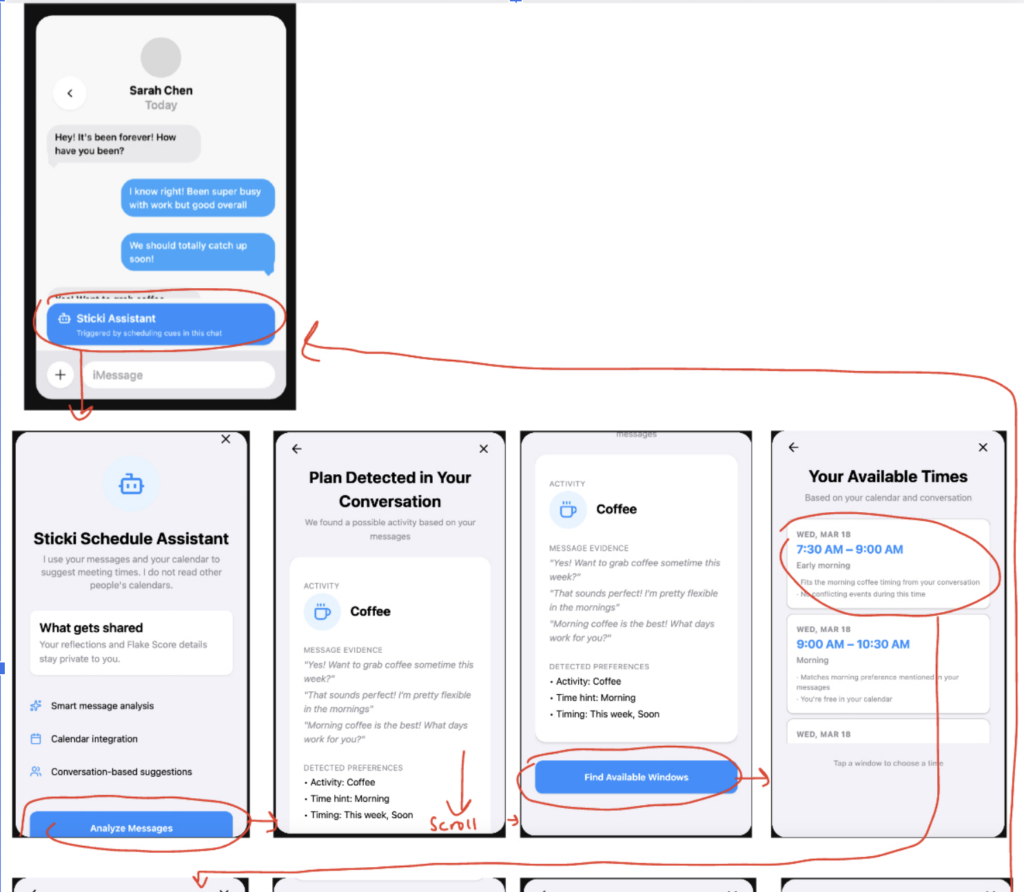

Scheduling Assistant

The scheduling flow allows the app to analyze recent text messages, with key divergence points depending on whether or not plans are detected and whether or not calendar permissions are enabled. The happy path is one in which the bot detects plans and the user has synced their calendar, allowing the bot to propose scheduling options. In this ideal path, the focus is on providing information and choices to the user, with little work required on the user’s part.

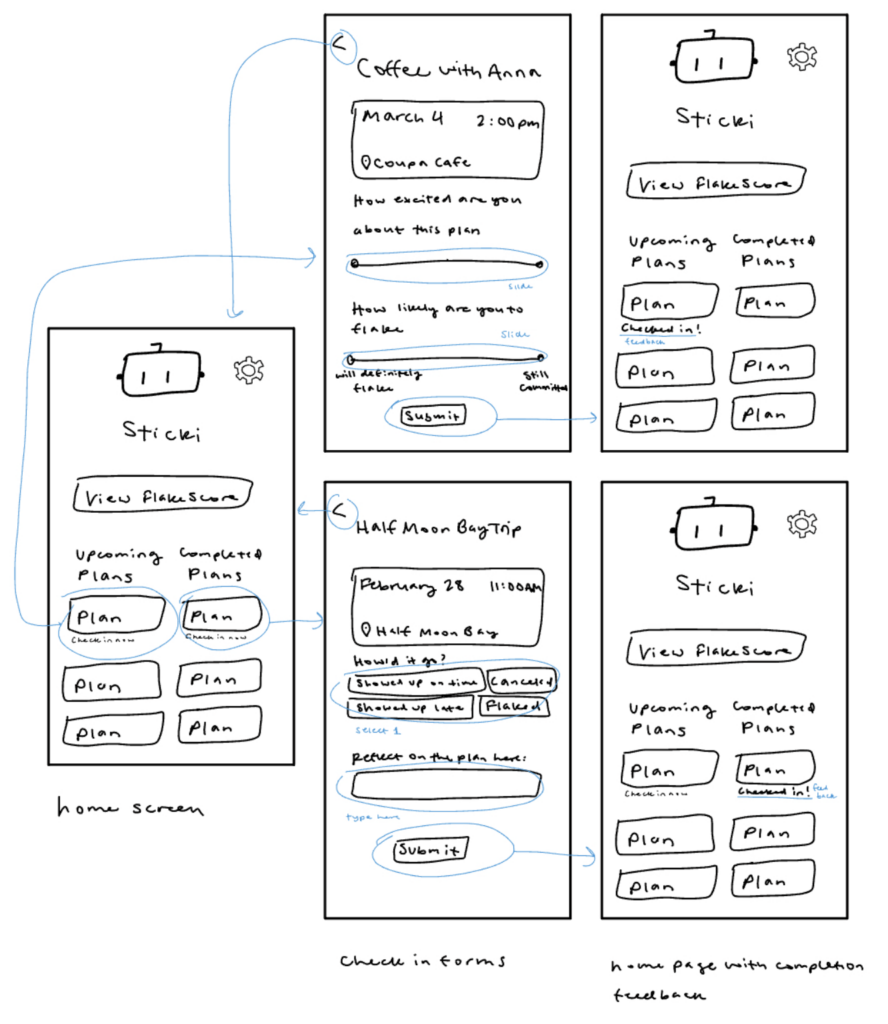

Plan Check-In

In this flow users check in for upcoming or completed plans. The most substantial part of the flow is filling out the check-in report; thus, the happy path is the one in which the user successfully completes and submits the form (whether for pre-event or post-event check-in).

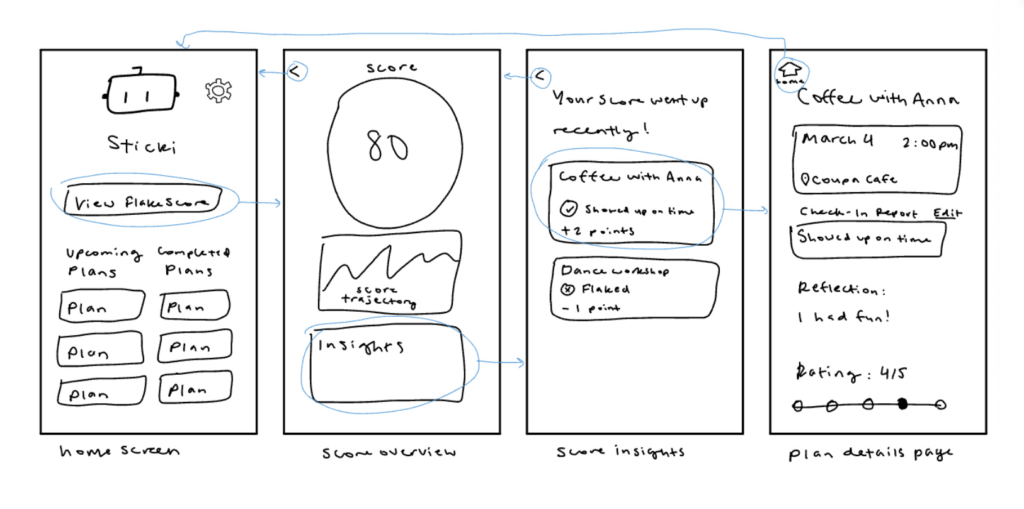

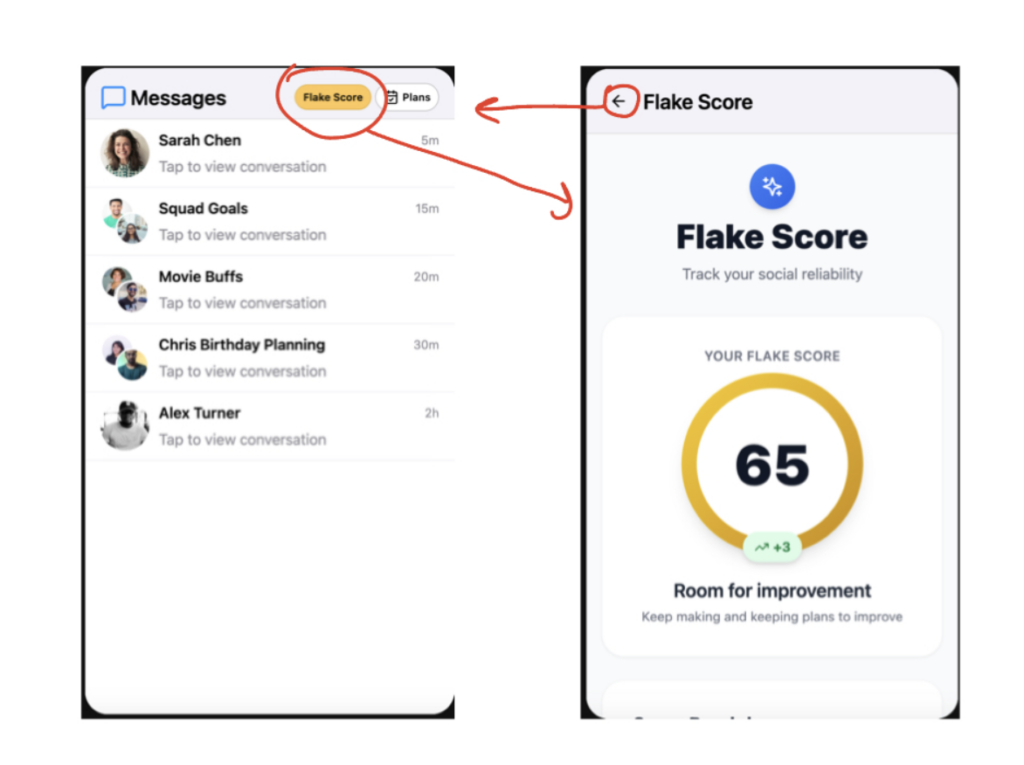

Flakescore

Users can check their Flakescore and view further details in this flow. We identified two happy paths, which represent varying amounts of detail that the user can go into when viewing their Flakescore. Similar to the scheduling flow, there is little work required on the user’s end. The ideal outcome of the flow then depends on how much or how little information the user wants to access.

Sketchy Screens

Onboarding Flow

The core functionality of the onboarding flow is for the user to review the privacy policy and set their calendar permissions; little onboarding is required since the guiding principle of our design is to make tasks easy for the user. Following feedback, we added a screen (top row, second from the left) informing the user why they are being asked to sync their calendar, as well as a nudge screen (top row, fourth from the left) which gives them the option to change their decision.

Scheduling Flow

The scheduling flow is fairly linear, since the purpose is largely to do automated work and present it to the user for review and confirmation. Following group critique, we added more meta-information, such as screen captions, arrows, and circles, to highlight the user path on top of the UI sketches.

Check-Ins

This flow allows users to complete a simple check-in from the homescreen. We revised the screens to include prompting when check-ins are appropriate (“check in now”) and success feedback when check-ins have been completed (“checked in!”).

Flakescore

The flakescore flow is focused on depth, allowing users to view more and more details relating to their score. Following feedback we added more back buttons for error handling and user control, and we also added more labeling so it was clear what each graphic and card was supposed to communicate.

Branding

Moodboard

Because our app is focused on making plans stick and fostering healthy social habits, we wanted to capture the mood of a fun social plan in our moodboard. We felt that “playful” encapsulated this sentiment well, and we wanted to make sure that our solution supported users’ confidence, with guiding words like “encouraging,” “inclusive,” and “curious.” We decided to anchor these choices in blue and yellow colors, which feel positive and universal. We chose some abstract images and textures, as well as some that verged on the childish, to capture the whimsicality of our solution. Since our solution was conceptualized around a social “bot,” we included some images of personified objects and robots.

Style Tile

We chose a simple color palette based on the colors represented in the style tile. Our primary blue color matches the iMessage text buttons for seamless messaging integration, and our other primary color, a warm yellow, captures the playful and social spirit of our solution. Our light theme conveys an open and clear style, which is compatible with the warm, inclusive, and playful energy from our moodboard. We chose sans-serif fonts for our typography, with font families that look approachable and casual; UI components are round, minimal, and modern, evoking the style of casual social media platforms. High-contrast coloring (saturated colored buttons, gray icons, and black text on white background) meets accessibility standards and offers good readability.

Additional branding work, including how we turned our individual mood boards and style tiles into our collective ones, can be found here.

Medium-Fidelity Prototype

Prototype Link

Flows

We implemented three main flows as in the sketchy screens: the scheduling assistant, pre- and post-plan check-ins (which followed a very similar flow), and the Flakescore. At this stage we added color and icons, using the blue and yellow color scheme and simple icons from our style tiles.

Scheduling Assistant Flow

From the wireflows to this iteration we added greater detail about each plan, including its compatibility with the user’s calendar and percentages to represent that.

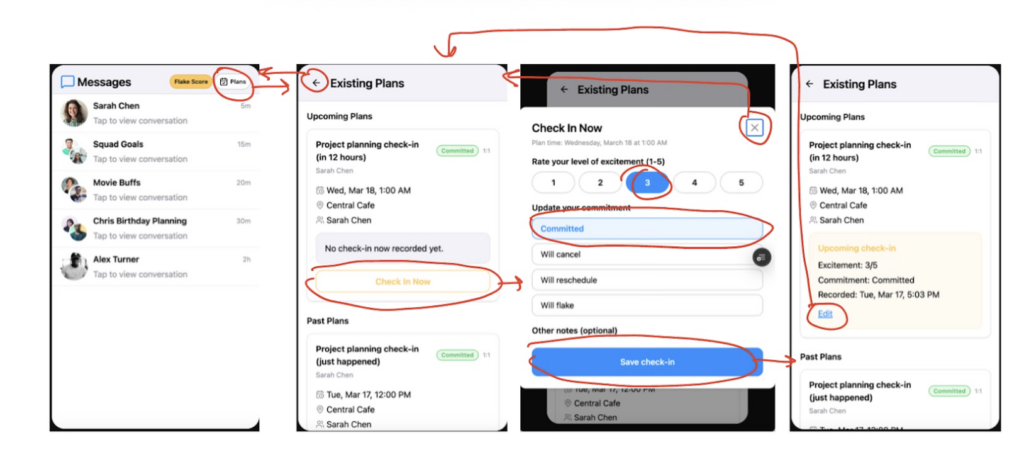

Pre/Post-Plan Check-In Flow

These visuals demonstrate a pre-plan check-in, but the flow is identical to the post-plan check-in. From the wireflows we changed the plans view and created a standard form UI that would feel familiar to users. We added more details to each plan and made the check-ins editable, creating a cyclical design pattern.

View Flakescore Flow

For our medium-fidelity prototype this was the simplest of our flows, with little interactivity. However, our final prototype made the flow more meaningful with additional paths and information.

Usability Testing

Task Design

In our usability testing we observed users completing our four main tasks. We wanted to evaluate how well the app communicated its core functions as well as how effectively it targeted flaking behavior. We collected qualitative data about the users’ process itself, noting hesitation, confusion, and satisfaction. Additionally, we asked questions throughout the process and at the end to get a richer picture of our testers’ experiences.

Our tasks were:

- Schedule a plan with a friend: Users were asked to navigate to a text thread and schedule a plan based on the thread’s contents.

- Complete a pre-plan check-in: Users completed a check-in flow for an upcoming plan.

- Complete a post-plan check-in: Users completed a check-in flow for a recently completed plan.

- Interact with the FlakeScore: Users located and explored the flake score page, learning about their score components and how the score was calculated.

Key Questions:

How was the experience of automatic scheduling?

How did it feel to “check in” with your intentionality and behaviors surrounding a social plan?

How does the app experience differ from how you normally make and reflect on social plans?

Results

We uncovered these three main issues, which we addressed in our final prototype:

Issue 1: Unclear whether data was personal or shared with participants

Relevant task(s): 1, 2, 3, 4

Throughout several tasks, users were unsure whether their information would be shared with others (e.g. reflections in pre- and post-plan check-ins, Flakescore). When they reached these pages in testing, they expressed confusion about with whom the information was being shared, which hindered their ability to fill out interactive sections.

We addressed this by adding clarification messages on each of the relevant screens, including the scheduling assistant page, the check-ins, and the Flakescore page. The message was some variation of “your check-ins and reflections are visible only to you.” We decided to make these forms and datapoints private to preserve user privacy and encourage users to be honest; in usability testing some testers mentioned that they would feel an incentive to lie if they knew their responses were being shared.

Issue 2: Flakescore Generation was Opaque

Relevant task(s): 4

Participants commented on several aspects of the Flakescore breakdown section, namely punctuality and specificity. They wondered how the platform had determined whether they had checked in to plans on time and what factors went into the specificity of their responses while making plans. This made it hard for them to interpret their score and the significance of its changes over time.

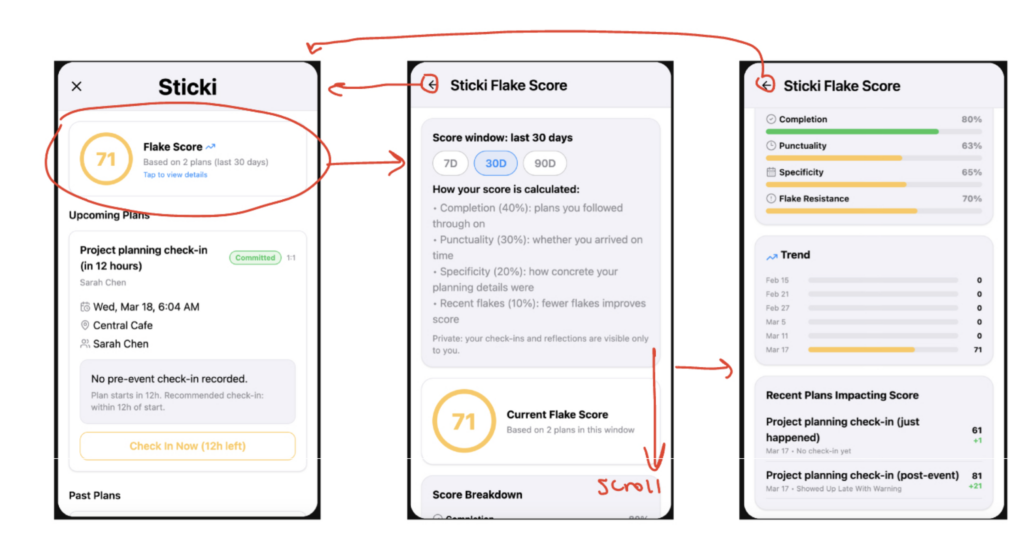

In response, we added more connections between the Flakescore and the plan outcomes that influenced it. We showed a list of recent plans which had an impact on the score, and whether they increased or decreased it. We also reworked the score trend card to show more detail. One participant additionally commented that it would be helpful to have a clearer time range for the FlakeScore to reveal how relevant it was to their recent activity (e.g., weekly score vs. monthly score). To address this we added score window toggles, so that users could view their score from the past 7, 30, or 90 days of activity. This also contributed to our overall goal of showing how the score was calculated by making the timeframe explicit.
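The score window toggles can be illustrated with a small sketch. The 7, 30, and 90 day windows come from the change described above; the `PlanEvent` record, the `window_summary` function, and the idea of summarizing a window as a net point change are assumptions for the example rather than a description of the prototype's code.

```python
from dataclasses import dataclass
from datetime import date, timedelta

ALLOWED_WINDOWS = (7, 30, 90)  # window lengths in days, from the report


@dataclass
class PlanEvent:
    """Hypothetical record of one plan outcome that affected the score."""
    day: date
    title: str
    delta: int  # signed effect on the score, e.g. +4 or -8


def window_summary(events: list[PlanEvent], today: date, window_days: int) -> dict:
    """List the plans inside the chosen window and their net effect."""
    if window_days not in ALLOWED_WINDOWS:
        raise ValueError(f"window must be one of {ALLOWED_WINDOWS}")
    cutoff = today - timedelta(days=window_days)
    recent = [e for e in events if cutoff < e.day <= today]
    return {
        "plans": [e.title for e in recent],
        "net_change": sum(e.delta for e in recent),
    }
```

Returning the contributing plan titles alongside the net change mirrors the goal of tying the score back to the specific outcomes that moved it.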

Issue 3: Source of suggestions in automatic scheduling flow was unclear

Relevant task(s): 1

Lack of visibility of system status in automatic scheduling flow.

The automatic scheduling flow did not provide information on how the suggestions were created, leading users to wonder whether the suggestions came from the text message content, the user’s calendar, or a combination of both.

To clarify this, we added a more comprehensive breakdown of suggested scheduling, including information about how the proposed times came from their calendar (e.g., “You’re free at this time on your calendar”) and the texts (e.g., “You indicated that you would both prefer morning times”).

We also realized that some of the confusion came from the lack of an onboarding step showing the user syncing their calendar, so we added this explicitly.
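One way to pair each suggested time with its source is sketched below. This is an illustrative assumption, not the prototype's implementation (which uses hardcoded calendar windows): the `free_windows` and `explain` helpers, the interval representation, and the "before noon counts as morning" rule are all hypothetical.

```python
from datetime import datetime, timedelta


def free_windows(busy, day_start, day_end, min_length):
    """Return gaps of at least min_length between busy (start, end) intervals.

    Assumes the busy intervals fall within the day being searched.
    """
    windows = []
    cursor = day_start
    for start, end in sorted(busy):
        if start - cursor >= min_length:
            windows.append((cursor, start))
        cursor = max(cursor, end)
    if day_end - cursor >= min_length:
        windows.append((cursor, day_end))
    return windows


def explain(window, prefers_morning):
    """Attach the user-facing reasons for suggesting this window."""
    start, _ = window
    reasons = ["You're free at this time on your calendar"]
    # Assumed rule: a window starting before noon satisfies a morning preference.
    if prefers_morning and start.hour < 12:
        reasons.append("You indicated that you would both prefer morning times")
    return reasons
```

Keeping the reasons as a separate list per window makes it straightforward to show users whether a suggestion came from the calendar, the text thread, or both.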

Reflection

Our usability testing showed us that several key pieces of information that we had taken for granted in our design (e.g., that reflections were private) were not clearly communicated to users. If we had more time, we would have loved to do another round of testing with an updated prototype to see if further issues surfaced. Additionally, we might have liked to try an A/B testing model to compare user preferences across two conditions (e.g., information shared vs. information private). Due to time constraints we made the choice ourselves based on insights from previous interviews and ethical considerations.

Final Prototype

Hi-Fidelity Prototype Link

Clickable Prototype Final Draft

Fidelity

Our clickable prototype is a high fidelity mockup of an iMessage extension, simulated with some built-in dummy data. To emulate a real iMessage extension, we simulated the real flow of accessing the app from the text conversation screen. Though the exact fonts on our style tile were not accessible for our prototype, we used consistent sans-serif typography to bring out the approachability of our design. We used consistent colors from our style tile: blue (#2998FB) and yellow accents (#FFCC4D), with black text on light gray and white backgrounds for accessibility. Our visual design is clean and minimal throughout, using the simple icons and buttons from our style tile, with our Sticki bot logo interspersed for a light touch of playfulness from our moodboard.

Usability

Task Flows Overview

Onboarding

Happy path: Welcome → calendar integration → user selects “yes” → Sticki wants to access your calendar → user selects “Allow” → “You’ve chosen to sync” page → user selects “continue” → homepage

The onboarding page simulates granting calendar access, since we have not implemented that. Subsequent flows use hardcoded calendar data. Although granting permissions is the happy flow, the app also supports not syncing the user’s calendar.

Scheduling Assistant

Happy path: Start on messages page → open message analyzer → find available windows → select available window → select specific time → confirm selection → send calendar invite → done (return to messages)

Currently the text message content is hardcoded, so that there is data for the message analyzer to work on. The analyzer uses NLP techniques to detect potential plans within the message content. The calendar time windows are also hardcoded since we currently do not support genuine calendar integration.

Pre/Post-Plan Check-In

Happy path: Start on homescreen → complete check-in → automatically redirected to homescreen

View Flakescore

Happy path: Start on homescreen → click on Flakescore card → view Flakescore page → return to homescreen

The plans contributing to the score are hardcoded, but the score itself is dynamically generated.

Hi-Fi Flows

Flow 0: Onboarding

This onboarding flow is the default when users first use the app, though in subsequent uses they are directed to the homepage. In the onboarding flow the user reads and agrees to the privacy policy, which is required for usage of the app. They then have the option to sync their calendar or not (if they choose to sync there is a step simulating granting access). Users are then provided a summary of their choice for reference. The end of the flow directs users to the Sticki homepage.

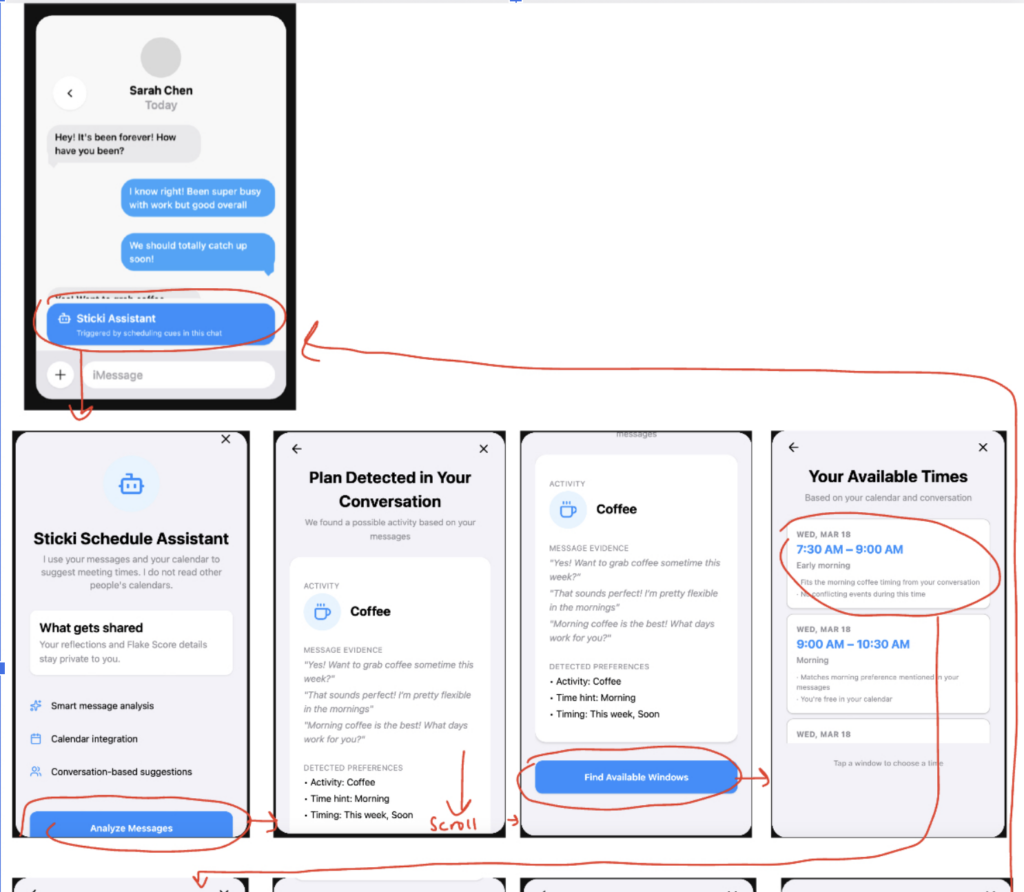

Flow 1: Scheduling Flow

The user first clicks on the scheduling assistant to open it, and then they click through a series of steps in which the bot analyzes the conversation and finds appropriate scheduling windows. Each step includes more detail about how the timing was generated, based on feedback from usability testing. The generated calendar event is also editable based on user preferences. At the end of the flow, the user is redirected back to the messaging screen. These plans are then added to the user’s account and are displayed on the homescreen, enabling integration with flow 2.

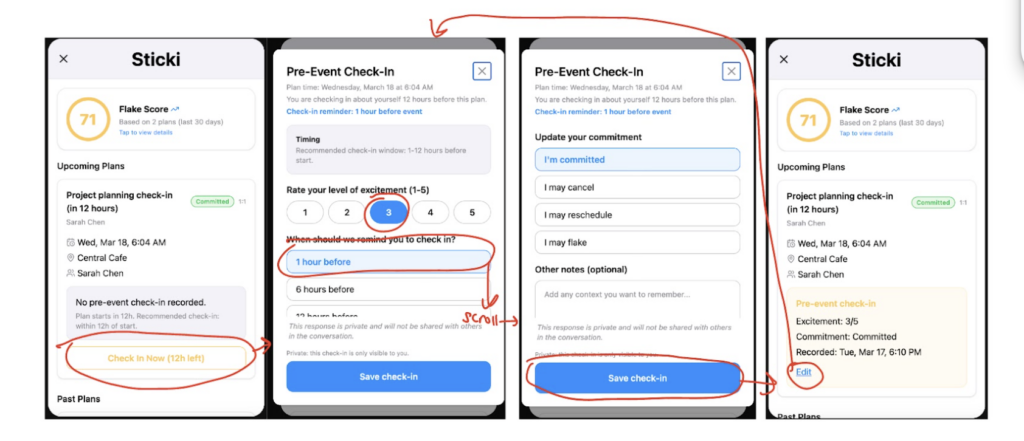

Flow 2: Pre/Post-Plan Check-In

In this flow the user can complete the check-in directly from the homepage. Clicking the check-in button causes the check-in form modal to pop up. The user completes their selection and saves, which redirects them to the homescreen. They have the option to edit their response at any time, which reopens the modal. From the med-fi version, we added more information explaining that responses are not shared.

Flow 3: View Flakescore

The user can view the Flakescore directly on the app homepage. If they wish, they can also click into the score to view more details. Based on testing feedback, the score page now includes different time windows that the user can switch between. They can also scroll down to see which plans have affected their score recently.

Final Product Space

The problem Sticki addresses sits at an underserved intersection: the space between making a social plan and keeping one. Existing tools either focus on individual productivity or event logistics, but none target the psychological and behavioral dynamics that determine whether plans actually happen after they’re proposed. Our competitive analysis confirmed that most scheduling tools treat commitment as a binary outcome, using RSVPs as a metric, and have no mechanics for the uncertain, fluctuating middle ground where most flaking occurs.

Our research provides insight into why this gap exists. Through our diary study, bookended by interviews with Stanford students, we found that flaking is multi-causal and highly contextual. Data analysis revealed that plan vagueness, competing priorities, emotional ambivalence at commitment time, and lack of follow-up communication all interact to produce cancellations. The literature on implementation intentions backs up one of our clearest empirical findings: vague plans have dramatically lower follow-through than specific ones, because specificity transforms an intention into an automatic behavioral trigger.

Sticki is uniquely positioned to address this because it intervenes at multiple points in the commitment process: not just when plans are made, but in the days leading up to them and in reflection afterward. By living inside iMessage, where plan-making conversations already happen, it removes the context-switching friction that caused participants in our study to abandon other tools. The check-in system directly operationalizes intentional reflection before or after a plan, meaningfully shifting how seriously users take their commitments. The FlakeScore’s private framing addresses a key tension our personas surfaced: users want accountability, but public pressure or shame-based mechanics create avoidance rather than motivation. Together, these features fill a key gap in the social planning space, promoting well-being through healthy social behaviors.

Conclusion

Ethics

Problem

Our prototype addresses the problem of scheduling friction by taking the user’s schedule and finding compatible times to make plans; additionally, it promotes reflection by synthesizing users’ self-reported behavior into an evaluative numerical score. The scheduling component has a data privacy dimension, while scoring raises ethical questions of its own.

Impact

Our solution promotes healthy and meaningful social relationships by bolstering the time and experiences within which those relationships are formed and maintained. In a world where our lives increasingly unfold online and automated technical solutions reduce the need for human interaction, our solution is focused on bringing people back together. More specifically, Sticki removes the barriers to socializing by making scheduling easy and purposeful. Our approach is highly personalized, offering custom scheduling and behavioral scoring for each user. To do so, we collect a high volume of user data. The ethics of this data collection relies on a fair and transparent contract with the user about its privacy and usage.

Ethical Reflection

In our team discussions, we weighed the importance of a personalized, seamless, and behaviorally effective user experience against the ethical considerations of privacy and evaluative nudges. Ultimately, personalization is fundamentally necessary to our design. We realize and acknowledge that our solution may not be the right fit for people boycotting AI or preserving a high level of digital privacy. However, through our assumption testing, we learned that many people already freely share their personal information with LLMs and other digital platforms and in many cases feel very comfortable doing so. Discomfort came from the unexpected — for example, seeing a friend’s name appear in an AI generated response after uploading a screenshot with that information. We address this concern by clearly showing what information our platform has access to, how we use it, and how we do not use it. With a high degree of data protection and lack of data monetization, we feel confident that we can preserve our users’ privacy and mitigate harms.

We also considered the ethics of a “Flakescore,” and the implications of scoring users’ behavior. While we wanted to pursue a social solution to leverage social pressure for commitment, we ultimately decided to keep our solution personal. Having a personal score already invites a reaction and deeper reflection, whereas a score visible to friends or the broader public invites feedback, which could escalate into bullying or harassment. Anticipated harms meaningfully informed our process, leading to our final prototype.

Reflection

Takeaways

We learned a lot about flaking from this process. Unlike some habits, flaking is highly variable. The same person can exhibit a variety of different flaking and commitment behaviors, depending on factors like time of day, week, and quarter, duration, cost, and the behavior of others. At the same time, the same situation can produce different decision-making processes for different people; the acceptability of certain behaviors, like flaking on a close friend, depends on that unique situation and relationship. Motivation really does matter, and how excited someone is about a social plan is directly related to how much effort they put into scheduling it and showing up day-of.

In our research process we saw the power of storytelling and narrative data in action. Flaking is intrinsically linked to emotion. It can provoke feelings of guilt and shame, while the opposite behavior or commitment can create feelings of pride and satisfaction. The most powerful insights came from these emotion driven stories. When participants told us how they felt when they did what they did, we had a better sense of their needs.

In our brainstorming process, we learned that dark horse ideas are powerful; what originally started as a public shaming forum for flaking eventually turned into the Flakescore in our prototype today. In our usability testing participants noticed the little things, like a page that didn’t scroll or confusing wording in a message, highlighting the need for meticulous attention to detail.

Throughout the whole process, we learned to write about our decisions, tensions, reasoning, outcomes, and reflections. We learned that a design choice is only as good as its justification. We take this skill with us going forward, grounding our design practice in clear and persuasive communication.

Successes & Failures

Our implementation managed to capture the core ideas from our ideation process. When tested and presented to users, it got a reaction at every step, indicating that the intervention felt meaningful. It achieved the goal of reducing friction, leaving little work to the user. If anything, it may have succeeded too well at that goal, leaving less room for user engagement and rewards. Vibecoding was a double-edged sword in our prototyping process; while it allowed us to develop an initial prototype very rapidly, it put us at a distance from the implementation, making it hard to understand how technical features were implemented and to make necessary changes. As a result, some flows and pages were constrained by the first iteration of the AI-generated design, making it hard to imagine UIs beyond what we had. Some flows also have a bit of a “vibecoded look,” which could be off-putting to users.

Next Steps

Our next step would be to go back to our story map and implement all of it, even the nice-to-haves. We would build out each task flow with optional rabbit holes for power users, and add more rewarding features to incentivize use. We would also spend more time developing our policies, particularly around privacy, drawing on specialized research and input from stakeholders with policy experience.

Future Behavior Design

Our next behavior design project will be similarly data-driven, taking the tools we learned in this class (diary studies, intervention design, interviewing best practices, assumption tests) and reinforcing them with larger testing pools and standardization methods. The research we have studied around behavior and habits, as well as behavior-specific literature, will be crucial in informing how we approach the problem space. In line with our ethics discussions in class, this project showed us that ethical considerations must be present at all stages of the design process, not treated as an afterthought. As we work on other projects in our lives, many of which have profit or scaling goals, we will carry this approach with us.